Choi, Lee, Chung, Park, Park, Sohn, and Lee: Directed Graph-based Refinement of Three-dimensional Human Motion Data Using Spatial-temporal Information

Abstract

With recent advances in computer science, there is an increasing need to convert human motion to digital data for human body research. Skeleton motion data comprise human poses represented via joint angles or joint positions for each frame of captured motion. Three-dimensional (3D) skeleton motion data are widely used in various applications, such as virtual reality, robotics, and action recognition. However, they are often noisy and incomplete because of calibration errors, sensor noise, poor sensor resolution, and occlusion due to clothing. Data-driven models have been proposed to denoise and fill incomplete 3D skeleton motion data. However, they ignore the kinematic dependencies between joints and bones, so unrelated joints can act as noise in determining a marker position. Inspired by a directed graph neural network, we propose a novel model to fill and denoise the markers. This model directly extracts spatial information by creating bone data from joint data and extracts temporal information with a long short-term memory layer. In addition, the proposed model can learn the connectivity between joints via an adaptive graph. In our evaluation, the proposed model showed good refinement performance on unseen data whose noise levels and missing-data patterns differed from those used in training.

Keywords: Machine learning · Human information processing · Signal processing

1 Introduction

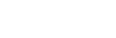

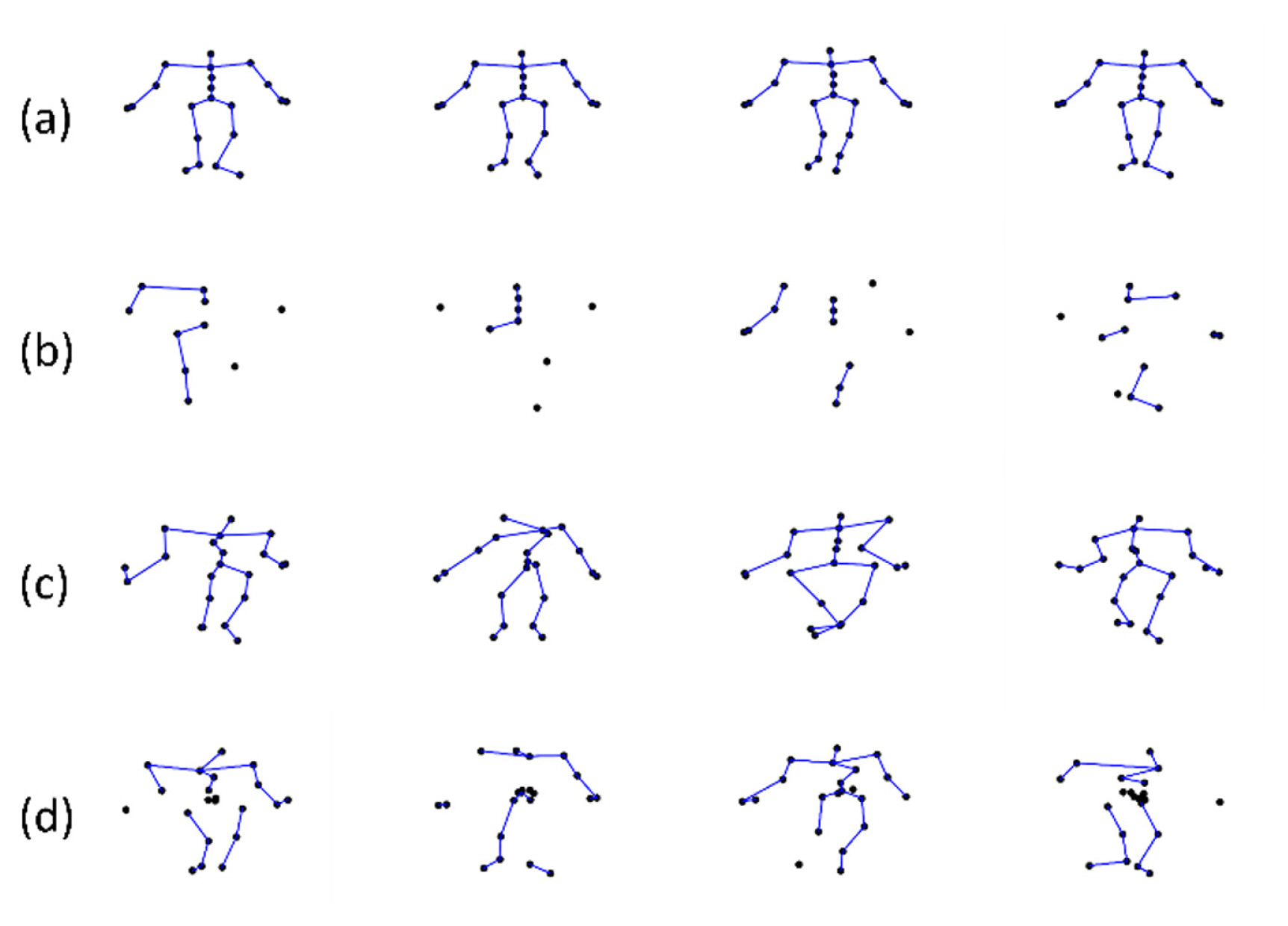

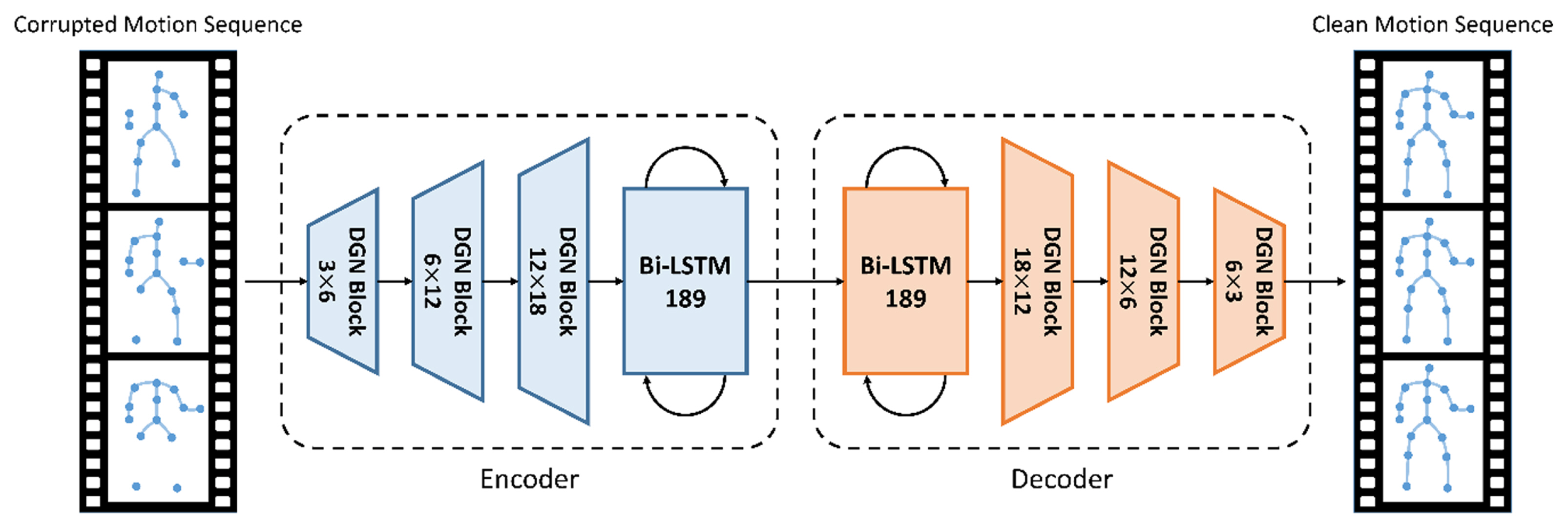

With recent advances in computer science, there is an increasing need to convert human motion to digital data to study the behavior of the human body, for example in gait analysis [1–3]. Motion capture systems have been used to measure human or animal movement as sequential motion data [4]. Skeleton motion data generated using such a system comprise human poses represented via joint angles or positions for each frame [5]. Three-dimensional (3D) skeleton motion data are widely used in several applications, such as human-computer interaction [6], virtual reality [7], robotics [8–10], movie production [11], and action recognition [12–16]. Motion capture methods are broadly categorized into marker-based and markerless methods. Professional marker-based sensors, such as VICON [17] and Xsens [18], can capture motion data with high precision; however, they are expensive and only available in certain places. Markerless sensors, such as Microsoft Kinect, can capture motion data at a low cost, but the captured data are noisy and have low precision. A common issue with 3D skeleton motion data is that they are often noisy and incomplete due to calibration error, sensor noise, poor sensor resolution, and occlusion caused by clothing on body parts [19]. Fig. 1 shows types of incomplete data. For effective data usage, data refinement should be performed beforehand [20–22]. Processing packages for refinement are provided in commercial motion capture systems, such as VICON [17], but they require manual user editing, which is labor- and time-intensive and results in unnatural movement because the kinematic information is not considered [11, 20, 21]. Therefore, several scholars have focused on motion data refinement. Data refinement methods can be broadly categorized into non-data-driven and data-driven methods. Data-driven methods use a large amount of data to extract spatio-temporal patterns inherent in motion data.
Notably, classical data-driven methods are action-specific: multiple actions cannot be handled in a single model, and each action has to be trained separately [23, 24]. Recently, deep learning (DL) methods, which are widely used data-driven methods, have succeeded in computer vision, image processing, pattern recognition, natural language processing, and computer graphics [25–28]. DL has also shown good results in motion data processing and generation [29–33]. Holden et al. [29, 30] used a convolutional autoencoder model to encode human motion data into a low-dimensional manifold and showed that the model could decode them back into clean motion data. Although the model is not action-specific, the convolutional and pooling layers cause a jittery effect on the refined motion sequences [31]. Inspired by [29, 30], Li [5] proposed an autoencoder model comprising a fully connected layer (FCL) and a bidirectional long short-term memory (LSTM) recurrent neural network (NN) [34, 35], which can express sequence data better than a convolutional NN (CNN). The model imposes bone length loss and smooth loss in the learning process, thereby minimizing the jittery effect and generating natural-looking motion. Notably, previous studies using an FCL [5, 19, 36] or a CNN [29, 30] ignore the kinematic dependencies between joints and bones. In particular, an FCL mixes information from all joints, which can act as noise in determining a missing marker’s position. For example, the shoulder joint is irrelevant in predicting the missing marker at the knee (Fig. 2(a)). It is more reasonable to predict the missing joint at the knee using the same joint in the temporally surrounding frames and the joints spatially around the knee (Fig. 2(b)). In the field of human action recognition, many scholars have represented skeleton motion data as a graph with joints as nodes and bones as edges, using prior knowledge of the local connections of human joints [14–16].
They have shown that bone information, which represents the direction and length of bones, is complementary to joint information and is a good modality for representing human motions. In addition, Shi [15] represented skeleton motion data as a directed acyclic graph and trained its connections adaptively. Inspired by [5] and [15], we propose a novel model that fills and denoises skeleton motion data using the information on relevant joints by representing the skeleton motion data as a directed acyclic graph. In particular, we propose a novel graph autoencoder model that combines a directed graph network (DGN) block proposed in human action recognition [15] with a data refinement model [5] to refine incomplete 3D human motion data. The proposed model uses spatial information directly by creating bone data from joint data, whereas previous studies included it only indirectly through a bone length loss, and it also handles temporal information with the LSTM layer. Moreover, by taking both joint and bone data as input, the proposed model can learn the dependency between joints from data via an adaptive graph. The major contributions of this study are as follows.

1. To the best of our knowledge, this is the first time a directed acyclic graph neural network (GNN) has been applied to motion data refinement considering both spatial and temporal information. In addition, we demonstrate that it is highly effective in representing human motion data, even for refinement.
2. The proposed model is robust because it uses neighboring joints to predict missing joints, whereas other networks can be affected by irrelevant joints with severe noise or frequently missing joints.
3. The proposed model applies not only to various types of unseen data but also to input that has not been preprocessed (e.g., by rotation). In contrast, previous models preprocessed the data for translation and rotation. These processes require the assumption that a particular joint is always measured, which makes them hard to generalize to many cases and time-consuming. Because the proposed model considers the joint kinematic structure, it works well with data translation alone and can be generalized to various data.
4. On the CMU mocap dataset [37], the proposed model exceeded the state-of-the-art performance for 3D skeleton motion data refinement using three types of losses.

2 Related Works

Incomplete data result from equipment noise and occlusion during motion measurement. Thus, prior works eliminated noise from human data and predicted the positions of missing markers. The core of human data refinement is extracting and preserving the body’s intrinsic spatial-temporal pattern. As mentioned above, human data refinement methods can be broadly categorized into non-data-driven and data-driven methods.

2.1 Non-data-driven Methods

Classical data refinement methods use a linear time-invariant filter to remove noise. Bruderlin [38] proposed a multiresolution filter for motion data filtering, showing that techniques from the image and signal processing domain can also be applied to animated motion. Lee [39] proposed a computationally efficient smoothing technique that applies a filter mask to orientation data. However, these filter-based methods generate unnatural motion data because each joint is handled independently, so spatial information cannot be used. In subsequent studies, linear dynamical system and Kalman filter theories were used to learn motion kinematic information. Shin [40] used Kalman filtering to remove motion capture noise while mapping a performer’s movements to an animated character in real time. Li [41] proposed the DynaMMo model, which comprises a Kalman filter and learns latent variables to fill missing data, whereas Lai [42] effectively reconstructed human data using low-rank matrix completion theory and algorithms, exploiting the low-rank property of human motion representations. In addition, Burke [43] recovered missing markers by combining the Kalman filter and low-rank matrix completion.

2.2 Data-driven Methods

Data-driven motion refinement methods have attracted considerable attention because the amount of available data has increased with recent improvements in computer performance. Classical data-driven methods learn filter bases from motion data. For instance, Lou [23] proposed a model that learned a series of spatio-temporal filter bases from precaptured data to eliminate noise and outliers. Akhter [44] proposed a bilinear spatio-temporal basis that simultaneously exploits spatial and temporal regularity while maintaining generalization performance for new data, and used it for gap-filling and denoising motion data. Sparse representation has recently been widely used for denoising motion data. Xiao [45] assumed that incomplete poses could be represented as linear combinations of several poses and treated the missing marker problem as one of sparse representation. In addition, Xiao [24] eliminated motion data noise by partitioning the human body into five parts to gain a fine-grained pose representation. Xia [46] combined statistical and kinematic information and restored motion by imposing smoothness and bone length constraints. However, these methods do not generalize across action types: they must be trained separately for each action, which makes them impractical. With the recent rapid development of DL, it is also widely used in motion data refinement. Holden [30] learned various human motion data using a convolutional autoencoder and interpolated them, whereas Mall [19] proposed a deep, bidirectional, recurrent framework to clean noisy and incomplete motion capture data. Li [5] proposed a bidirectional recurrent autoencoder (BRA) model and refined human motion data by imposing kinematic constraints. In addition, Li [36] used a perceptual autoencoder to improve the bone length consistency and smoothness of refined motion data. These studies extract kinematic information from human data only indirectly, through constraints.
However, models that do not consider the human structure do not preserve the kinematic information of human data. For example, an FCL uses the ankle joint when predicting the shoulder joint, although the ankle has little influence on the shoulder. In human action recognition, a representative research field using human data, many scholars have demonstrated that representing human data as a directed graph is effective for extracting bone information. For example, Shi [15] classified various human behaviors by representing human data as an adaptive directed graph. Inspired by the aforementioned studies, we propose a model that can directly extract kinematic information from human data by representing them as an adaptive directed graph.

3 Method

Skeleton-based motion data comprise frame sequences, which are represented by 3D coordinates of joints. However, raw 3D skeleton motion data are often incomplete because of occlusion and noise during capture, and they need to be refined before use. To refine incomplete data effectively, we applied a DGN to a refinement model, which makes it robust to rotation and deformation.

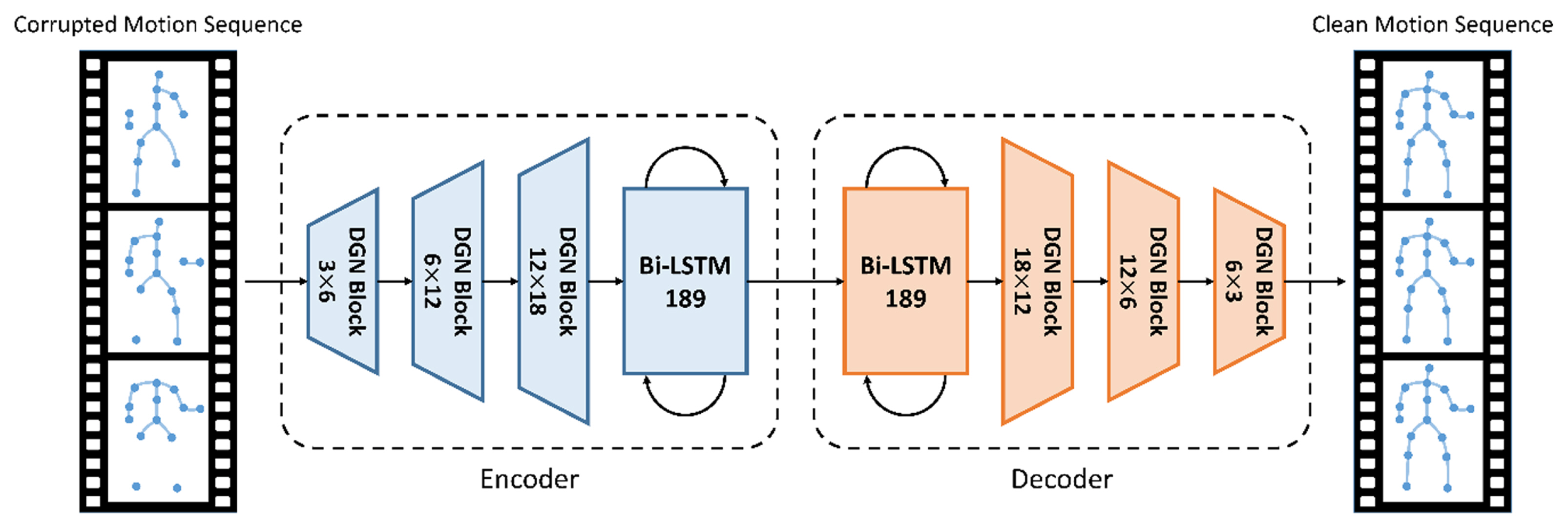

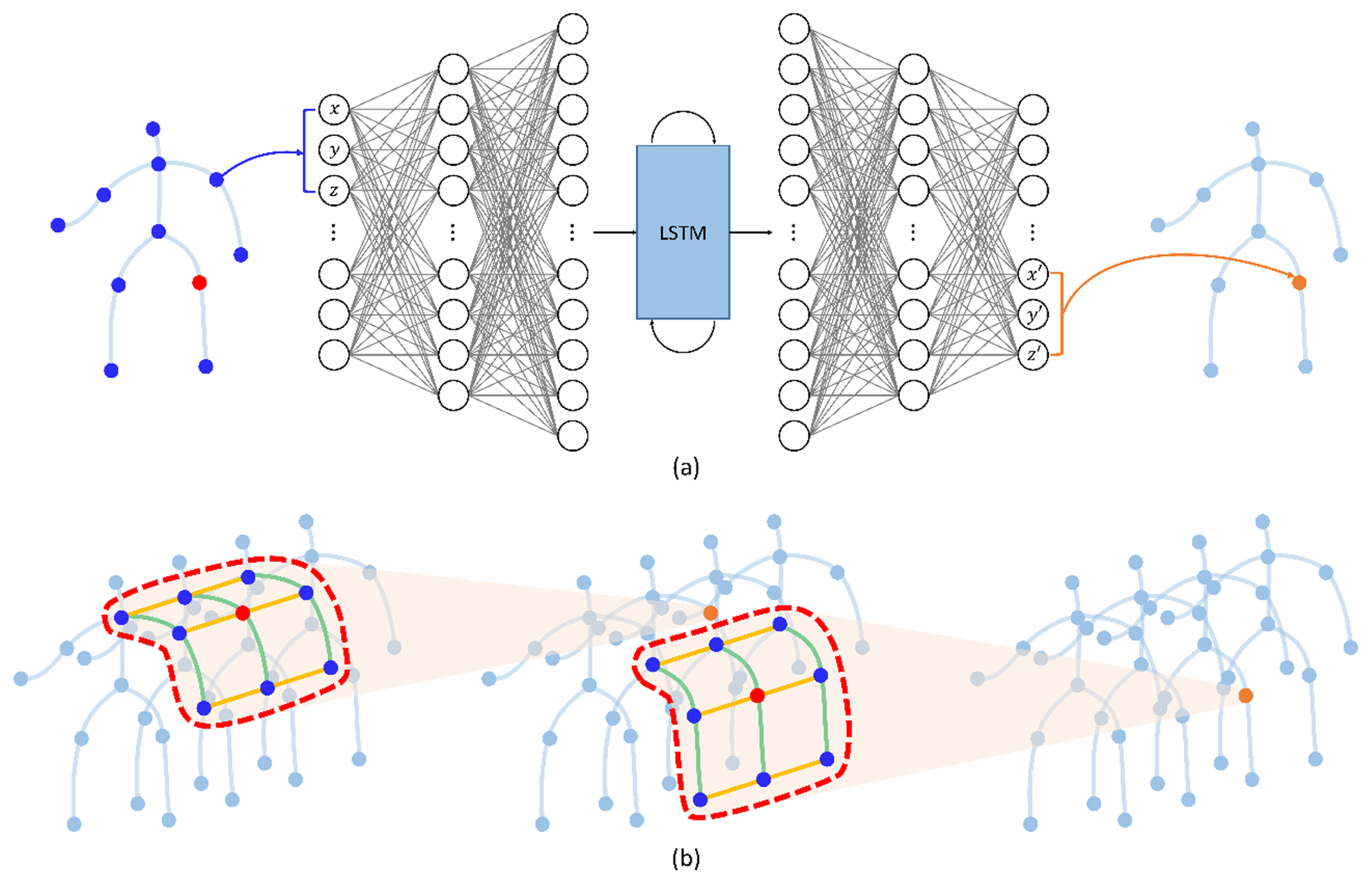

Fig. 3 illustrates the BRA with DGN block (BRA DGN) architecture proposed in this study. The architecture comprises an encoder that projects the input motion sequences to a hidden unit space and a decoder that projects them back to clean motion sequences. The encoder and decoder each contain a bidirectional LSTM to extract temporal information. This architecture, which replaces the FCL in [5] with a DGN block, can extract spatial and temporal information by converting skeleton sequences into a graph structure.

We build bone data from joint data and represent the skeleton as a directed acyclic graph by expressing joints as nodes and bones as edges. Because we represent skeleton motion data as a directed graph, the DGN block can extract information using dependencies between joints and bones. A graph containing the attributes of nodes and edges enters several DGN blocks as input, and graphs of the same structure are produced as output. In each DGN block, the attributes of the nodes and edges are updated by their adjacent edges and nodes. The DGN blocks in the bottom layers extract the local information of nodes and edges while updating the attributes; in the top layers, the attributes of nodes and edges are aggregated and updated over a wider range. Thus, as a graph passes through the upper DGN blocks, it accumulates information from a wider range of joints and bones for refinement. Although this concept is similar to the principle of CNNs, the DGN block is designed for a directed acyclic graph. In this section, we describe the proposed model.

3.1 Notation

First, we describe the notation for the skeleton motion data used in this article. Let $M = [m_1, m_2, \ldots, m_N]$ be a clean motion dataset with shape $(N, C, T, V)$, where $N$ is the number of motion sequences, $C$ is the number of coordinate channels ($x$, $y$, and $z$), $T$ is the number of frames, and $V$ is the number of joints. Each $m_n = [p_1, p_2, \ldots, p_T]$ in $M$ is a motion sequence with shape $(C, T, V)$, where $p_t = [x_1, y_1, z_1, \ldots, x_V, y_V, z_V]$, with shape $(C, V)$, is the pose at frame $t$. We denote clean motion data as $Y$ and the corrupted data derived from the clean motion data as $X$.

3.2 Bone Information

Studies of human action recognition [14–16] have shown that bone information is essential to represent human motion data. Bone information, which represents the directions and lengths of bones, is crucial because the position of each joint (bone) is determined by its connected bones (joints), and the two are strongly coupled. In the motion refinement task, bone length information is also crucial because the lengths of the surrounding bones allow accurate spatial prediction of missing markers. However, in previous studies [5, 36, 46, 47], bone information has been used only indirectly, as a constraint in loss terms, without being extracted from the data. We extend the use of bone data beyond human action recognition by feeding it to the proposed model as input, expecting a better representation of the human skeleton and hence better refinement of missing markers. The bone information is defined as the difference between two connected joint coordinates. Given two joint positions $v_1 = (x_1, y_1, z_1)$ and $v_2 = (x_2, y_2, z_2)$, where $v_2$ is closer to the root joint than $v_1$, the bone information of $v_1$ and $v_2$ is $e_{v_1,v_2} = (x_1 - x_2,\; y_1 - y_2,\; z_1 - z_2)$.
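The bone definition above can be illustrated with a short NumPy sketch. It is a minimal example under stated assumptions: the five-joint skeleton in `EDGES` is hypothetical (it is not the 21-joint layout of Fig. 4), and the function name `joints_to_bones` is introduced here only for illustration.

```python
import numpy as np

# Hypothetical 5-joint skeleton, NOT the paper's 21-joint layout.
# Each pair is (near, far): `near` is the joint closer to the root.
EDGES = [(0, 1), (1, 2), (1, 3), (3, 4)]

def joints_to_bones(joints, edges):
    """Return bone vectors of shape (C, T, E) from joints of shape (C, T, V)."""
    near = np.array([n for n, _ in edges])
    far = np.array([f for _, f in edges])
    # Bone = far joint minus near joint, i.e. (x1 - x2, y1 - y2, z1 - z2).
    return joints[:, :, far] - joints[:, :, near]

rng = np.random.default_rng(0)
joints = rng.normal(size=(3, 64, 5))           # (C, T, V): one 64-frame window
bones = joints_to_bones(joints, EDGES)         # (C, T, E)
bone_lengths = np.linalg.norm(bones, axis=0)   # (T, E): length of each bone per frame
```

Each bone vector carries both direction and length, so the lengths needed by a bone length constraint fall out of the same array.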

3.3 Graph Construction

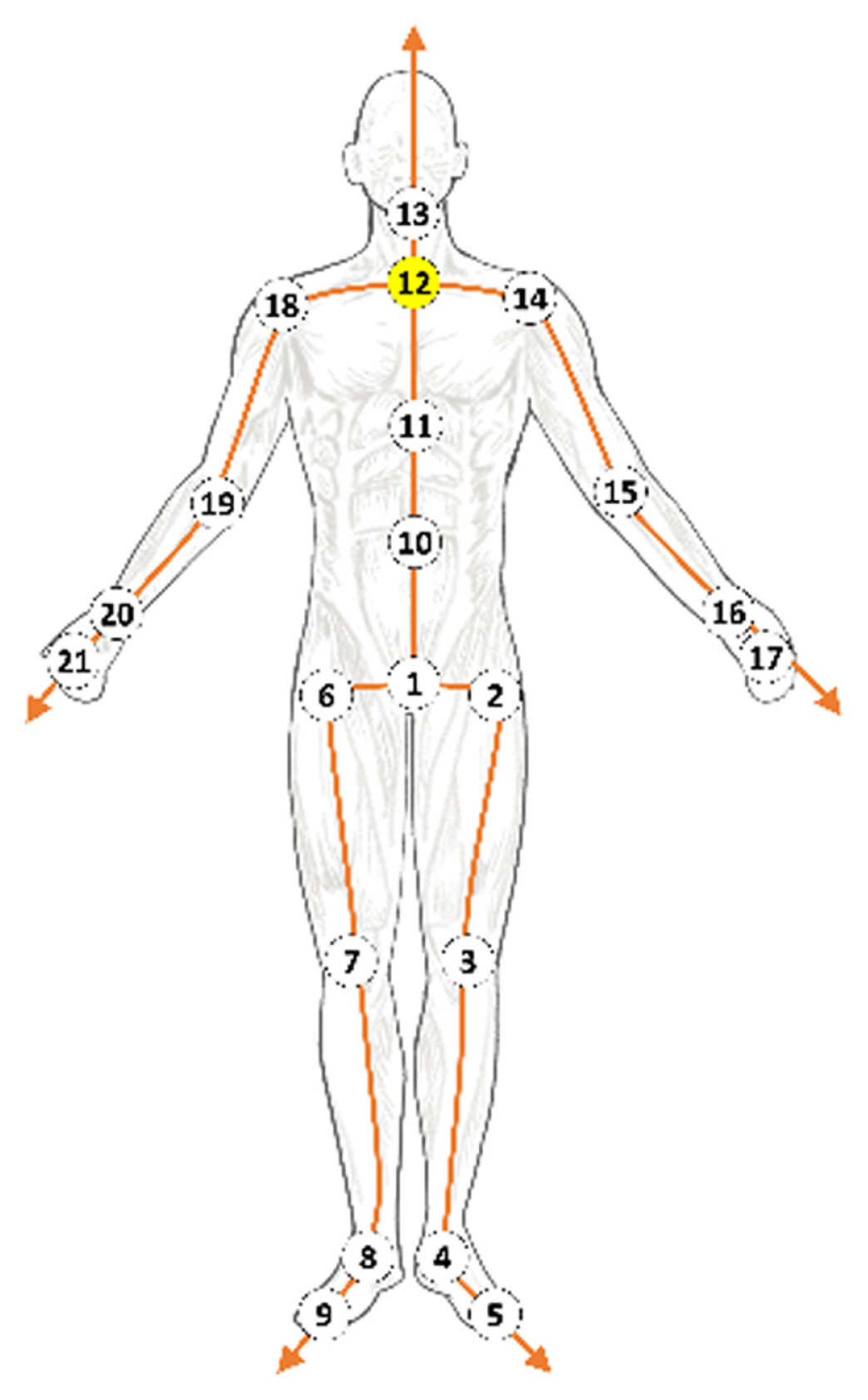

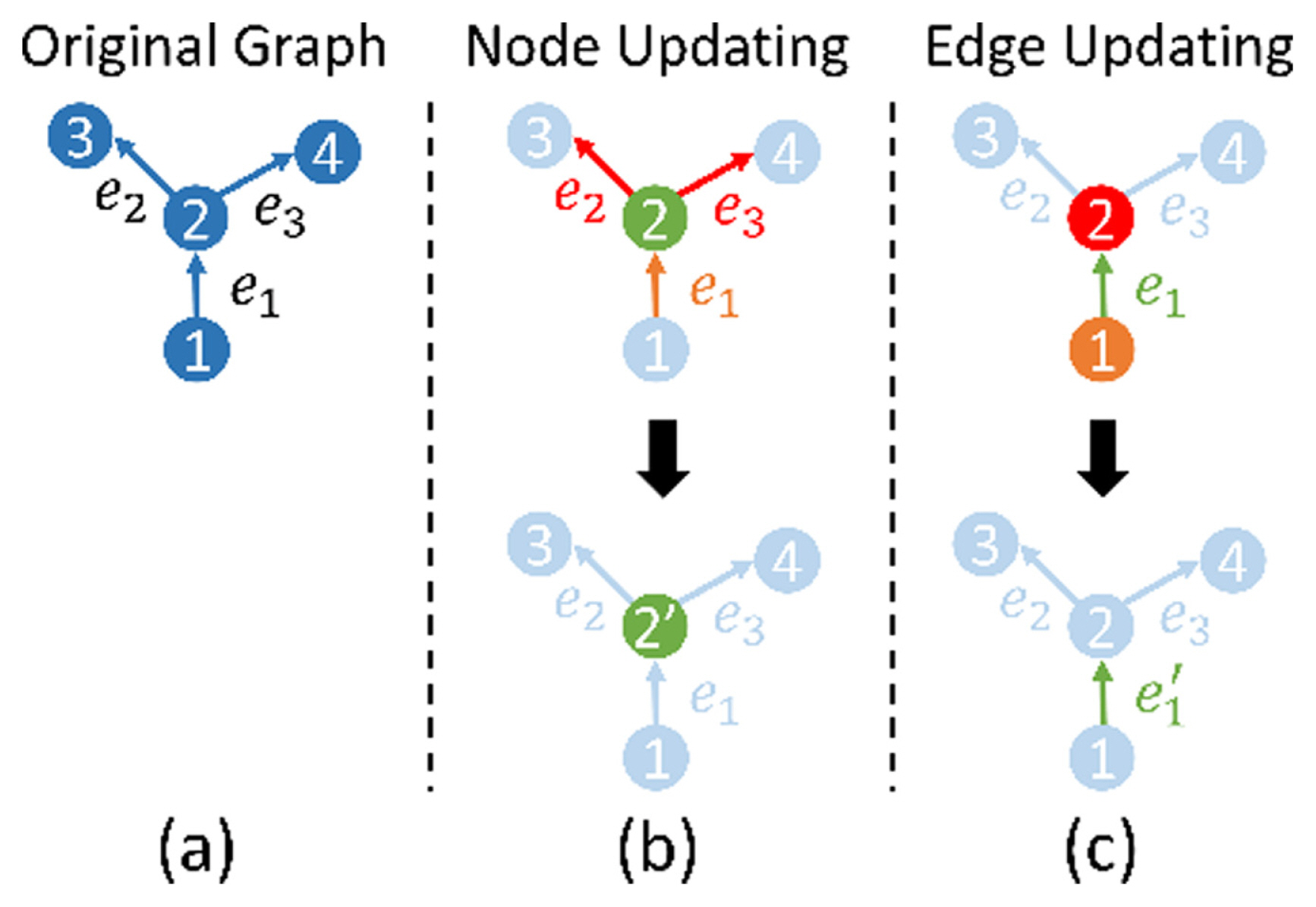

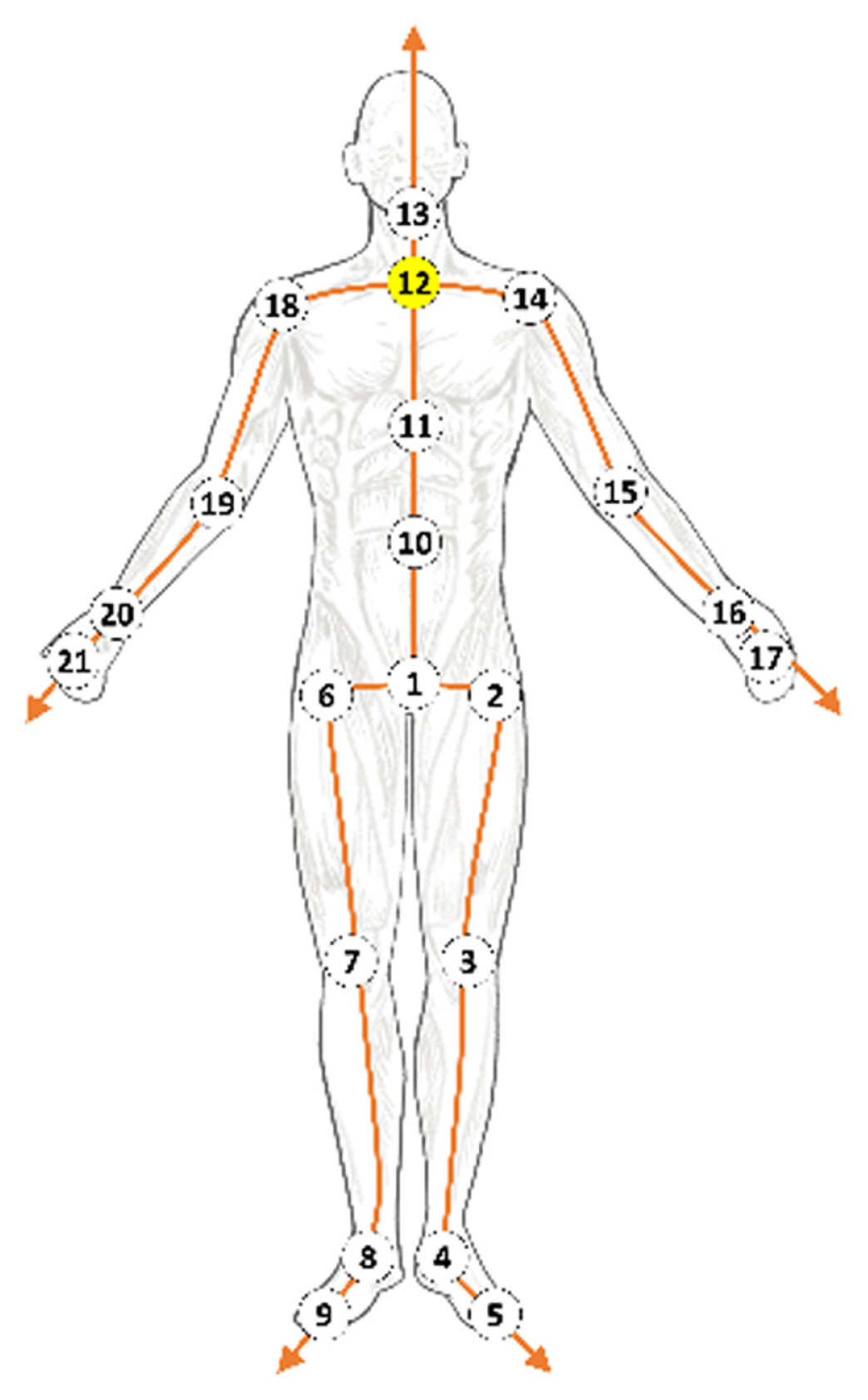

Li [5] and Holden [29, 30] refined a motion sequence using an FCL and a CNN, respectively. Their methods ignored kinematic dependencies between joints and bones because their networks had no means to preserve or extract spatial information. We represent skeleton motion data as a directed acyclic graph by expressing joints as nodes and bones as edges (Fig. 4), similar to [15]. A directed graph is well suited to human motion data because the movement of each joint is physically controlled by adjacent joints that are closer to the center. For example, the position of the wrist is determined by those of the shoulder and arm, which are closer to the root joint than the wrist. We set joint 12 as the root node and define each bone’s direction as pointing toward the joint farther from the center. In graph theory, an adjacency matrix represents the connections between the nodes of a graph: an element is 1 if the two nodes are connected and 0 otherwise. Although such a matrix can indicate connectivity between nodes, the adjacency matrix of an undirected graph cannot indicate edge directions. Therefore, to represent a human body as a directed graph, an incidence matrix is used to indicate the edge directions. The directed graph in Fig. 5(a) is represented by an incidence matrix, where $A$ denotes the incidence matrix and $T$ denotes the transpose operation. Unlike the adjacency matrix, the rows and columns of the incidence matrix represent the indexes of the edges and nodes, respectively. An element equal to 1 marks a target node, i.e., the node that an incoming edge points to, whereas an element equal to −1 marks a source node, i.e., the node from which an outgoing edge leaves. For example, the element at the second row and third column of the incidence matrix is 1 because $e_2$ points to $v_3$, and the element at the third row and second column is −1 because $e_3$ leaves $v_2$ (Fig. 5(a)). To separate the source nodes from the target nodes in the incidence matrix, we use $A_s$ to denote the source nodes; it contains only the absolute values of the incidence matrix elements that are −1. Similarly, we use $A_t$ to denote the target nodes; it contains only the incidence matrix elements that are 1. We set $A_s$ and $A_t$ as learnable parameters to form an adaptive body structure.
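The incidence matrix and its source/target split can be sketched in a few lines. The example is a minimal illustration under stated assumptions: `EDGES` and `NUM_NODES` describe a hypothetical five-joint skeleton rather than the graph of Fig. 5(a), the function name is illustrative, and the layout follows the row-per-edge, column-per-node convention described above.

```python
import numpy as np

# Hypothetical directed skeleton: each pair is (source, target), pointing away from the root.
EDGES = [(0, 1), (1, 2), (1, 3), (3, 4)]
NUM_NODES = 5

def incidence_matrices(edges, num_nodes):
    """Build the signed incidence matrix and its source/target parts.

    Rows index edges and columns index nodes.
    """
    A = np.zeros((len(edges), num_nodes))
    for e, (src, tgt) in enumerate(edges):
        A[e, src] = -1.0   # the edge leaves its source node
        A[e, tgt] = 1.0    # the edge points to its target node
    A_s = (A == -1).astype(float)   # absolute values of the -1 entries
    A_t = (A == 1).astype(float)    # the +1 entries
    return A, A_s, A_t

A, A_s, A_t = incidence_matrices(EDGES, NUM_NODES)
```

In a trainable model, `A_s` and `A_t` would be initialized this way and then registered as learnable parameters; the fixed arrays here only show the initial structure.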

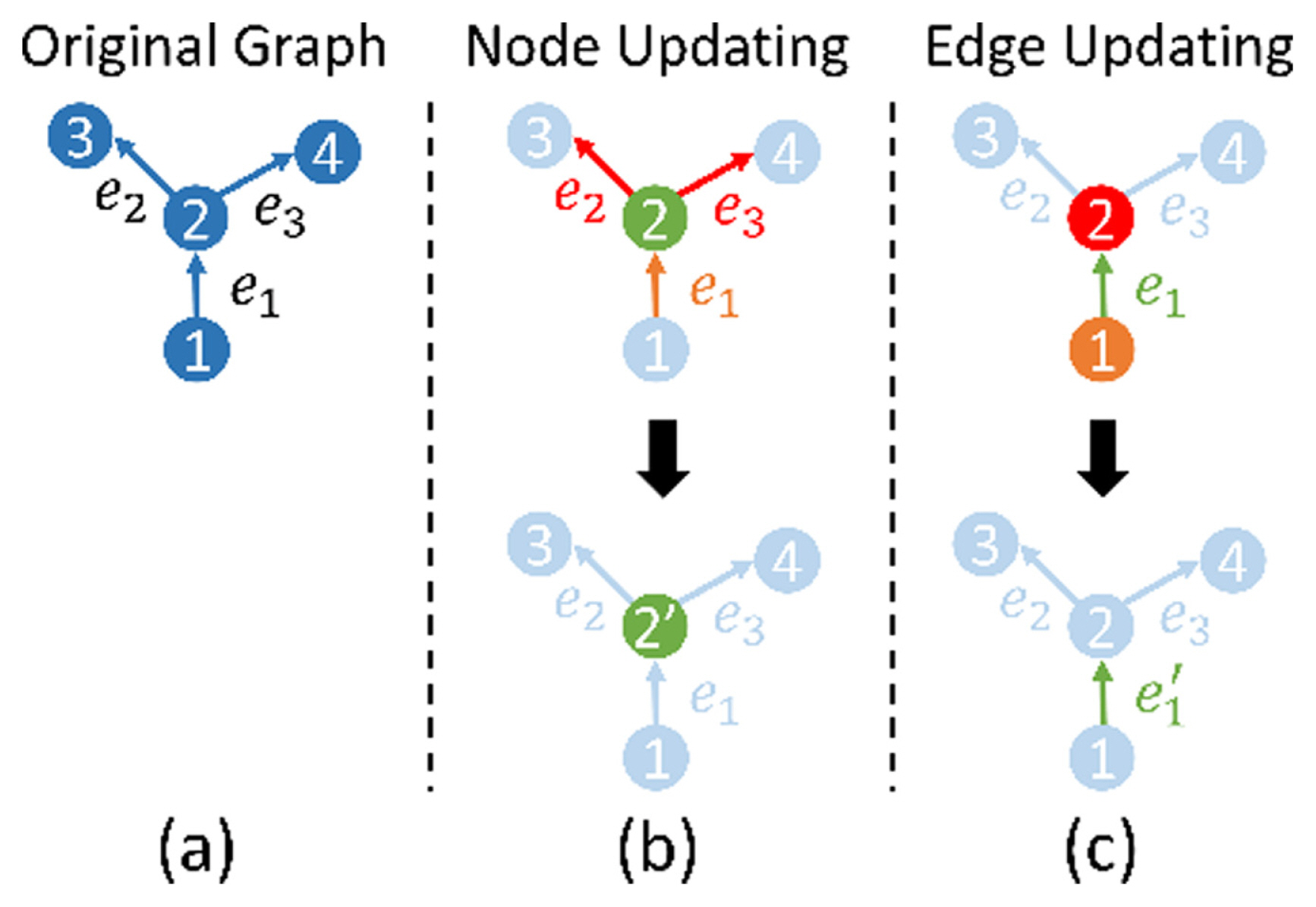

3.4 DGN Block

The DGN block, the basic block of a directed GNN [15], is the main building block of the proposed model. It extracts features by collecting information from connected neighboring nodes and edges in the spatial structure of the skeleton motion data. Because the BRA has limitations in extracting spatial information, we replaced the FCL with the DGN block. Figs. 5(b) and 5(c) show how the nodes and edges of the original graph are updated by the DGN block. The DGN block performs two main processes: (1) it aggregates the attributes and (2) it updates the nodes and edges. The equations for aggregating and updating the nodes and edges are, respectively, as follows:

$$f_v' = H_v\left(\left[f_v,\; f_e A_s^T,\; f_e A_t^T\right]\right) \tag{4}$$

$$f_e' = H_e\left(\left[f_e,\; f_v A_s,\; f_v A_t\right]\right) \tag{5}$$

where $H_v$ and $H_e$ denote the update functions of nodes and edges, respectively, and $[\cdot]$ denotes the stack operation of matrices.

The incidence matrix is used to aggregate the nodes and edges based on their connected edges and nodes. In Equation (4), $f_e A_s^T$ and $f_e A_t^T$ denote the aggregations of the attributes contained in incoming edges and outgoing edges, respectively. The node update function, $H_v$, updates the attributes of a node by combining that node with its incoming and outgoing edges and gives the output $f_v'$. Similarly, in Equation (5), $f_v A_s$ and $f_v A_t$ denote the aggregations of the attributes contained in the source and target nodes, respectively. The edge update function, $H_e$, updates the attributes of an edge by combining that edge with its source node and target node and gives the output $f_e'$.
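The aggregate-and-update step can be sketched for a single frame as follows. This is a simplified illustration, not the authors' implementation: it assumes each update function is one linear layer followed by a ReLU, stores attributes row-wise as (items, channels) so the matrix products appear transposed relative to the notation in the text, and uses a hypothetical five-joint skeleton with random weights.

```python
import numpy as np

def dgn_block(f_v, f_e, A_s, A_t, W_v, W_e):
    """One aggregate-and-update step on a single frame.

    f_v: (V, C) node attributes; f_e: (E, C) edge attributes.
    A_s, A_t: (E, V) source and target matrices (rows = edges, columns = nodes).
    W_v, W_e: (3C, C_out) weights standing in for the update functions (assumption).
    """
    # Aggregate edge attributes onto nodes.
    edges_with_node_as_source = A_s.T @ f_e    # (V, C)
    edges_with_node_as_target = A_t.T @ f_e    # (V, C)
    stacked_v = np.concatenate(
        [f_v, edges_with_node_as_source, edges_with_node_as_target], axis=1)
    f_v_new = np.maximum(stacked_v @ W_v, 0.0)  # linear layer + ReLU

    # Aggregate node attributes onto edges.
    source_nodes = A_s @ f_v                    # (E, C)
    target_nodes = A_t @ f_v                    # (E, C)
    stacked_e = np.concatenate([f_e, source_nodes, target_nodes], axis=1)
    f_e_new = np.maximum(stacked_e @ W_e, 0.0)
    return f_v_new, f_e_new

# Hypothetical skeleton and random weights, for shape checking only.
EDGES = [(0, 1), (1, 2), (1, 3), (3, 4)]
V, E, C, C_OUT = 5, 4, 3, 8
A_s = np.zeros((E, V))
A_t = np.zeros((E, V))
for e, (src, tgt) in enumerate(EDGES):
    A_s[e, src] = 1.0
    A_t[e, tgt] = 1.0

rng = np.random.default_rng(0)
f_v = rng.normal(size=(V, C))          # joint coordinates
f_e = A_t @ f_v - A_s @ f_v            # bone = target joint minus source joint
W_v = rng.normal(size=(3 * C, C_OUT))
W_e = rng.normal(size=(3 * C, C_OUT))
f_v_new, f_e_new = dgn_block(f_v, f_e, A_s, A_t, W_v, W_e)
```

Because the output keeps one row per node and per edge, several such blocks can be chained, which is how information from progressively wider neighborhoods is accumulated.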

4 Network Training

The proposed model is trained by minimizing three losses: position, bone length, and smooth losses. The total loss function is defined as a weighted combination of these losses, where $\lambda_1$, $\lambda_2$, and $\lambda_3$ denote the weight coefficients of the three losses. Above all, it is crucial to minimize the position loss because, more than the other losses, it ensures that the refined motion sequence stays close to the clean motion sequence in Euclidean distance, which is needed to generate natural motion. Hence, the proposed model is trained in three stages by changing the weight coefficients. Table 1 describes the parameters used in each stage. In the first stage, the parameters of the incidence matrix are kept fixed until the other parameters have been learned to some extent, to maintain prior knowledge of the human body’s kinematic structure. The batch size is set to 16, and Adam [48], an adaptive gradient descent algorithm, is used to optimize the parameters.
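Read as a weighted sum, the total loss can be written as below. This is a reconstruction under assumptions: the loss symbols are placeholders chosen here rather than the paper's own notation, and the pairing of $\lambda_1$, $\lambda_2$, and $\lambda_3$ with the position, bone length, and smooth losses simply follows the order in which the losses are listed.

```latex
\mathcal{L}_{\text{total}}
  = \lambda_1 \, \mathcal{L}_{\text{position}}
  + \lambda_2 \, \mathcal{L}_{\text{bone}}
  + \lambda_3 \, \mathcal{L}_{\text{smooth}}
```

Under this form, staged training amounts to changing the three weights between stages while the individual loss terms stay the same.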

4.1 Loss Function

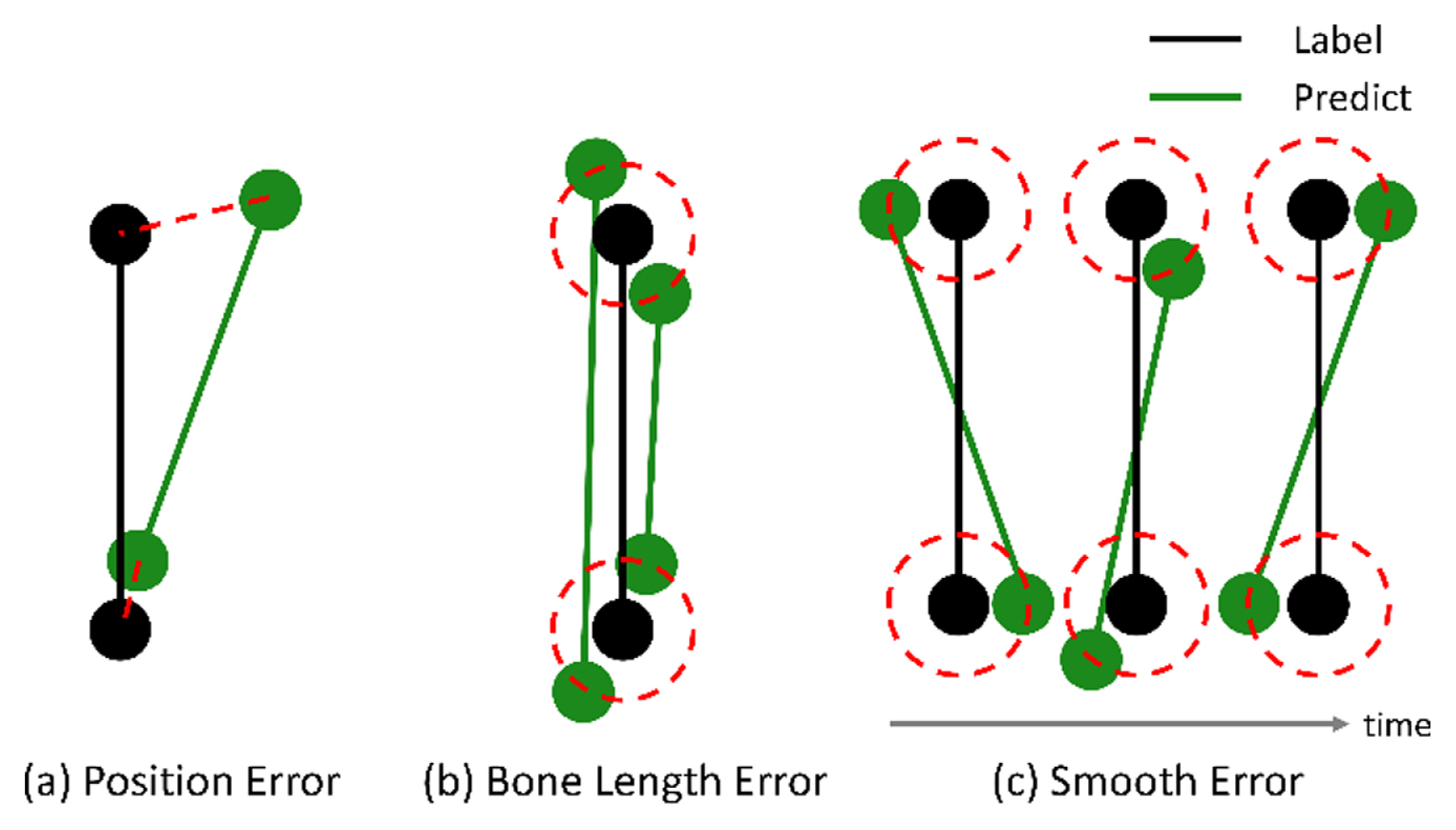

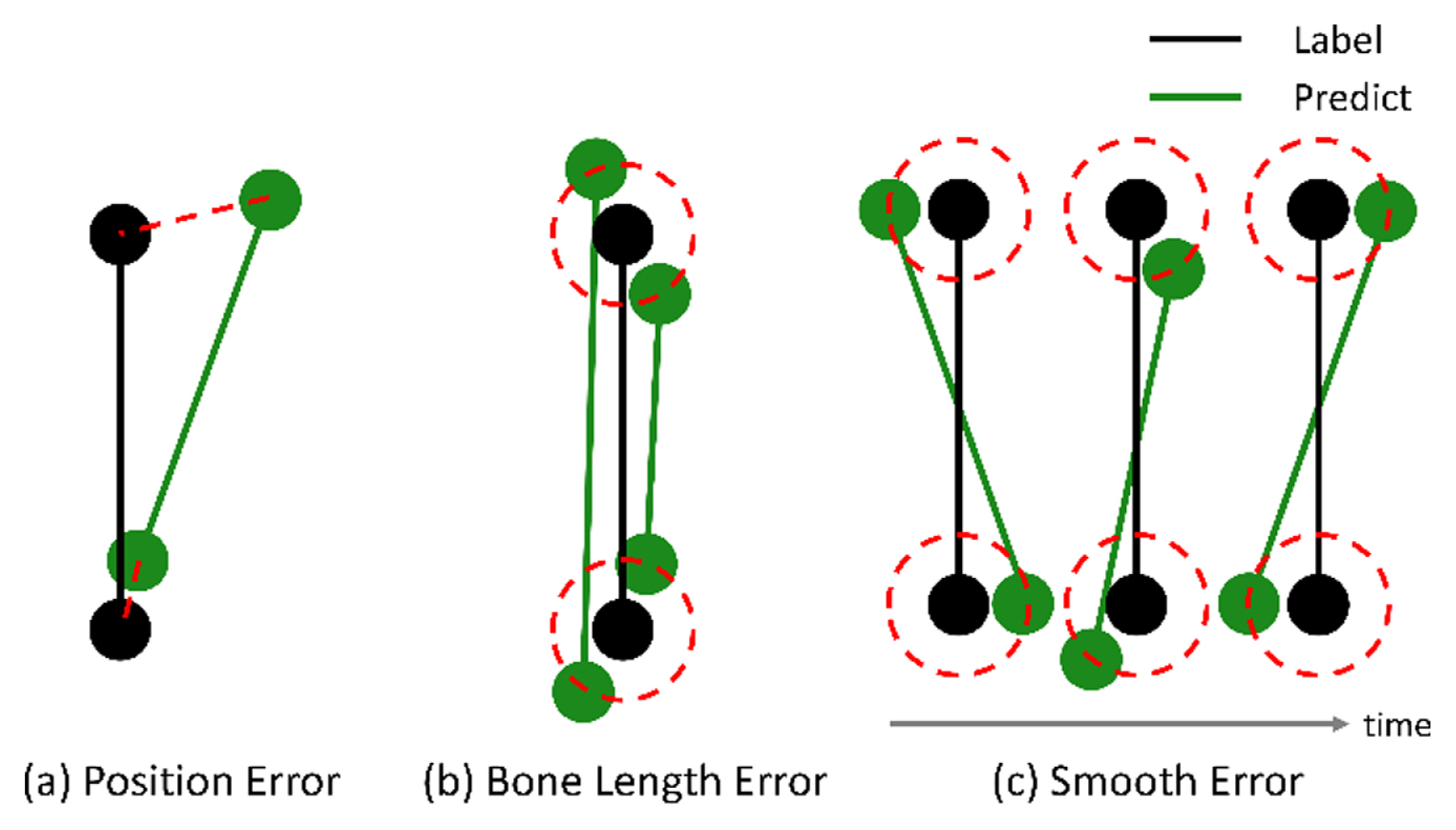

4.1.1 Position Loss

The most commonly used loss function for data refinement is the L2 loss between the network output and the ground truth. We define this loss as the position loss, which is the most critical loss because it measures the distance between the predicted and actual joint positions. Fig. 6(a) shows the predicted and actual joint positions when the predicted position is inaccurate. The position loss is formulated with the L2 norm, denoted $\|\cdot\|_2$. According to the results of previous studies that used only the position loss, the output motion sequence remains jittery [29, 30]. Meanwhile, in [5, 30, 36], which are the studies most closely related to this one, the bone length and smooth losses were used to remove the jittery effect.
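A position loss of this kind might be computed as in the sketch below. The exact normalization used in the paper is not recoverable from the text, so the choice of the mean squared L2 distance per joint and frame is an assumption, as is the function name.

```python
import numpy as np

def position_loss(pred, target):
    """Mean squared L2 distance between predicted and clean joints.

    pred, target: arrays of shape (C, T, V). Averaging over frames and joints
    is an assumption; a sum would differ only by a constant factor.
    """
    diff = pred - target
    squared_distance = np.sum(diff ** 2, axis=0)   # (T, V): per-joint squared distance
    return float(np.mean(squared_distance))

target = np.zeros((3, 4, 5))
pred = target.copy()
pred[0] += 1.0                                     # every joint is off by 1 along x
loss_shifted = position_loss(pred, target)
loss_perfect = position_loss(target, target)
```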

4.1.2 Bone Length Loss

Bone length constraints have been used in many studies [5, 36, 46] to extract kinematic and statistical characteristics by putting prior knowledge of bone length into the model. In Fig. 6(b), although the two predicted results (green dots) place the joint at different positions, the position losses of both results are the same because both are at the same distance from the label (in the red circle). To obtain natural motion sequences, the bone length loss is needed to keep the bone length constant. The bone length loss is defined in terms of $L_b$ and $l_b$, where $L_b$ denotes the bone length of the clean data and $l_b$ denotes the predicted bone length, i.e., the L2 norm of the difference between the predicted coordinates of the joints at the two ends of the bone.
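The situation in Fig. 6(b) can be reproduced numerically. The sketch below assumes a mean squared difference between predicted and clean bone lengths (the paper's exact form is not recoverable from the text) and uses a hypothetical two-joint skeleton; all names are illustrative.

```python
import numpy as np

def bone_lengths(joints, edges):
    """Bone lengths of shape (T, E) from joints of shape (C, T, V)."""
    near = np.array([n for n, _ in edges])
    far = np.array([f for _, f in edges])
    return np.linalg.norm(joints[:, :, far] - joints[:, :, near], axis=0)

def bone_length_loss(pred, target, edges):
    """Mean squared difference between predicted and clean bone lengths (assumed form)."""
    return float(np.mean((bone_lengths(pred, edges) - bone_lengths(target, edges)) ** 2))

# Two joints, one bone of length 1 along x, one frame.
edges = [(0, 1)]
target = np.zeros((3, 1, 2))
target[0, 0, 1] = 1.0

pred_a = target.copy()
pred_a[0, 0, 1] = 1.5          # error of 0.5 along the bone: bone stretched to 1.5
pred_b = target.copy()
pred_b[1, 0, 1] = 0.5          # error of 0.5 across the bone: length changes far less

dist_a = np.linalg.norm(pred_a - target)
dist_b = np.linalg.norm(pred_b - target)
loss_a = bone_length_loss(pred_a, target, edges)
loss_b = bone_length_loss(pred_b, target, edges)
```

Both predictions are equally far from the label, so a position loss cannot tell them apart, while the bone length term penalizes the stretched bone more heavily.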

4.1.3 Smooth Loss

A joint can be jittery in the temporal direction even when the position and bone length losses are the same (Fig. 6(c)). Because the motion of the human body should be smooth in the temporal direction, non-data-driven methods impose smoothness to obtain natural motion sequences by enforcing $C^2$ continuity on each feature dimension via a smoothness penalty term [49–51]. Recently, Li [5, 36] added a smoothness constraint at the training phase to train a DL model. Let $O$ be a symmetric tridiagonal matrix. The smooth loss is defined in terms of $O$ and $X'$, where $X'$, of shape $(T+2) \times (C \times V)$, is derived from $X$ by repeating the border elements, i.e., $p_0 = p_1$ and $p_{T+1} = p_T$.
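One way such a smoothness penalty might be computed is sketched below. Several choices are assumptions rather than the paper's definition: the tridiagonal matrix is taken to be the standard second-difference operator (2 on the diagonal, −1 beside it), the two rows that touch the padded border are dropped, and the penalty is averaged.

```python
import numpy as np

def smoothness_matrix(n):
    """Symmetric tridiagonal matrix with 2 on the diagonal and -1 beside it (assumed form)."""
    return 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)

def smooth_loss(x):
    """Mean squared second difference along time for x of shape (T, C*V)."""
    # Repeat the first and last poses so every original frame has two neighbours.
    padded = np.concatenate([x[:1], x, x[-1:]], axis=0)    # (T+2, C*V)
    O = smoothness_matrix(padded.shape[0])
    second_diff = (O @ padded)[1:-1]                        # keep the T rows of real frames
    return float(np.mean(second_diff ** 2))

T = 8
ramp = np.linspace(0.0, 1.0, T)[:, None]                    # steady motion in one dimension
jitter = ramp + 0.1 * ((-1.0) ** np.arange(T))[:, None]     # same motion with frame-to-frame jitter
loss_constant = smooth_loss(np.ones((T, 6)))
loss_ramp = smooth_loss(ramp)
loss_jitter = smooth_loss(jitter)
```

A static pose gives zero penalty, and the jittery sequence is penalized far more than the steady one even though both stay close to the same trajectory.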

5 Experiment and Results

5.1 Generating Incomplete Data

We use the CMU Motion Capture Database [37]. The motion data were captured with an optical motion capture system, with 31 markers attached to the actors. These data were converted from the joint angle representation in the original dataset to 3D joint positions, subsampled to 60 frames per second, and separated into overlapping windows of 64 frames (overlapped by 32 frames). Only the 21 most relevant joints were preserved (Fig. 4). The proposed model applies not only to windows of 64 frames but also to windows of other lengths. Notably, the location and direction of the skeleton in the human motion data can vary depending on when the data are captured. Similar to previous studies [5, 30], we removed the global translation by subtracting the root joint position from the original data and also removed the global rotation around the Y-axis. Finally, we subtracted the mean pose from the original data and divided by the absolute maximum value in each coordinate direction to normalize the data into [−1, 1]. This preprocessing helps a particular joint to lie in statistically similar locations, making it easier for the model to predict. The purpose of this study is to generate clean data from data corrupted by occlusion or noise. Because the CMU mocap data are clean, we have to create corrupted data by removing markers or adding noise to train the model. As human data are measured with a single measuring device, the type of noise can be considered the same throughout a dataset. Therefore, we generated white noise of a single type and added it to the position values of all joints.
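The windowing and corruption steps can be sketched as follows. The sketch makes several assumptions that the text does not settle: missing markers are represented by zeros, each joint in each frame is dropped independently with the given ratio, and `noise_std` is expressed in the same units as the coordinates. The random array stands in for a real capture, and all function names are illustrative.

```python
import numpy as np

def remove_root_translation(seq, root):
    """Subtract the root joint position from every joint. seq: (C, T, V)."""
    return seq - seq[:, :, root:root + 1]

def sliding_windows(seq, size=64, stride=32):
    """Cut a (C, T, V) sequence into overlapping windows: (N, C, size, V)."""
    starts = range(0, seq.shape[1] - size + 1, stride)
    return np.stack([seq[:, s:s + size] for s in starts])

def corrupt(windows, missing_ratio, noise_std, rng):
    """Add white Gaussian noise, then zero out randomly chosen joints (assumed encoding)."""
    noisy = windows + rng.normal(scale=noise_std, size=windows.shape)
    n, _, t, v = windows.shape
    # One mask entry per joint and frame, shared by the x, y, and z channels.
    missing = rng.random((n, 1, t, v)) < missing_ratio
    return np.where(missing, 0.0, noisy), missing

rng = np.random.default_rng(0)
sequence = rng.normal(size=(3, 160, 21))          # stand-in for one clean capture
centered = remove_root_translation(sequence, root=0)
clean = sliding_windows(centered)                 # windows of 64 frames, overlapped by 32
corrupted, missing = corrupt(clean, missing_ratio=0.4, noise_std=0.05, rng=rng)
```

The pair (`corrupted`, `clean`) then plays the role of the input $X$ and the label $Y$ during training.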

5.2 Comparisons with Baselines

In this section, we compare the performance of the proposed model (BRA DGN) with those of CNN [29, 30], BRA [5], and two further models, encoder-bidirectional-decoder (EBD) and EBD DGN. Although the last two models have the same architectures as BRA and BRA DGN, respectively, they do not impose smoothness and bone length constraints during the training phase. We compared these models in the following three experiments to evaluate the performance of the proposed model. All experiments were conducted on a workstation equipped with an Intel Core i9-10900X CPU and an RTX 3090 GPU.

Missing marker reconstruction. This experiment tests how well a model can predict a missing marker without noise. It demonstrates the reconstruction performance of the model and approximates a marker-based setup with little noise.

Missing marker reconstruction with noise. We evaluate the refinement performance on data in which noise and missing markers coexist. This case is close to a markerless setup, where noise and missing markers are frequent.

Missing marker reconstruction without standardizing. Standardizing skeleton motion data is intended to improve the model’s predictive performance by keeping each joint within a certain range. However, because the DGN block predicts missing markers using the positions of the surrounding joints, it is expected to predict missing markers well even without standardizing. Therefore, we evaluate the reconstruction performance without standardizing.

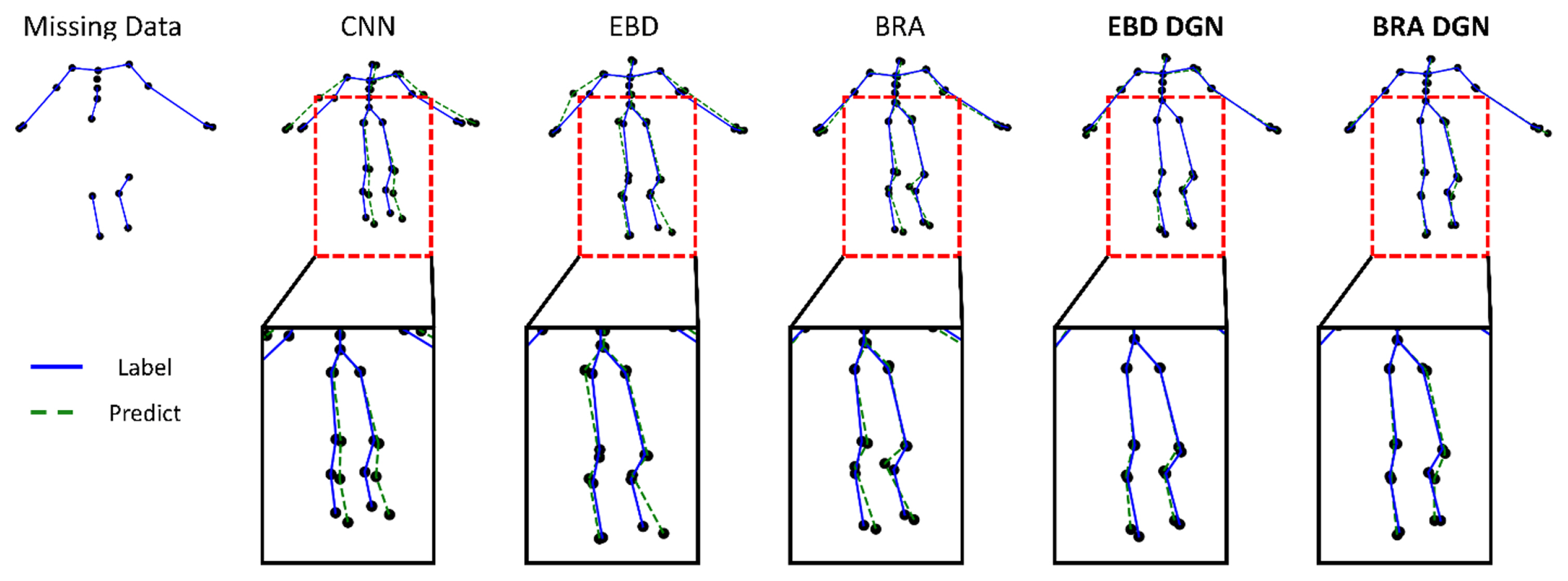

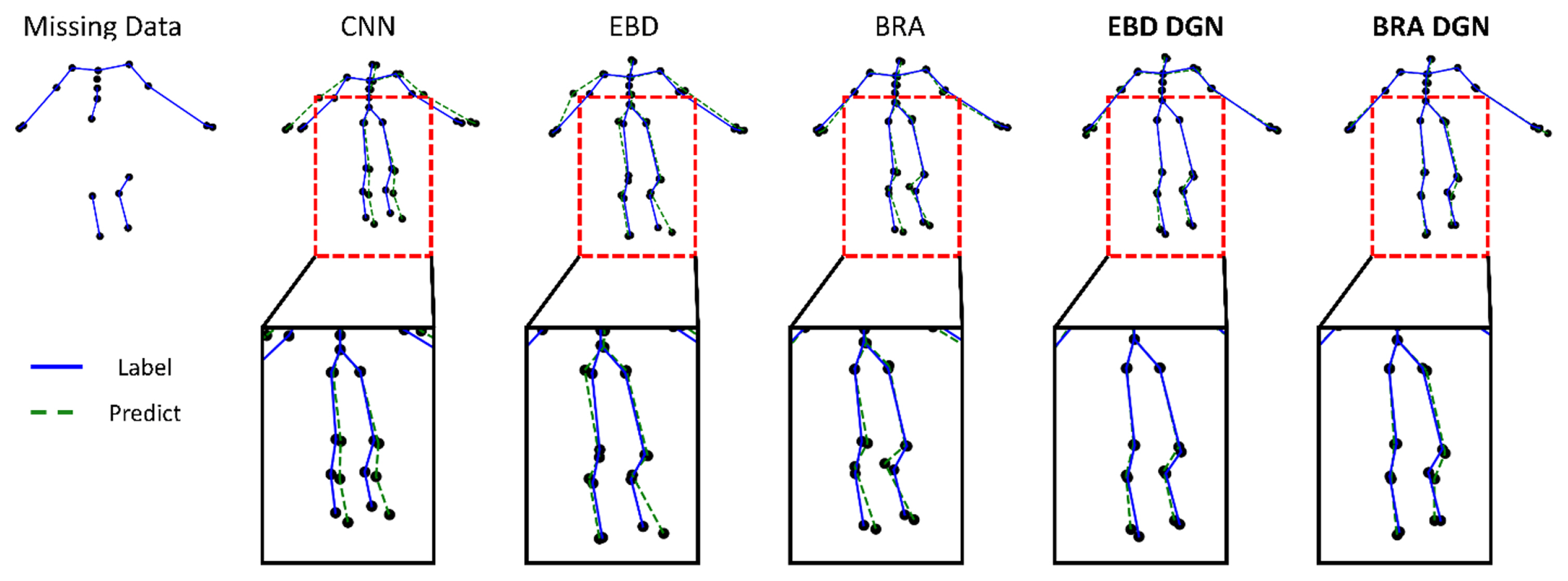

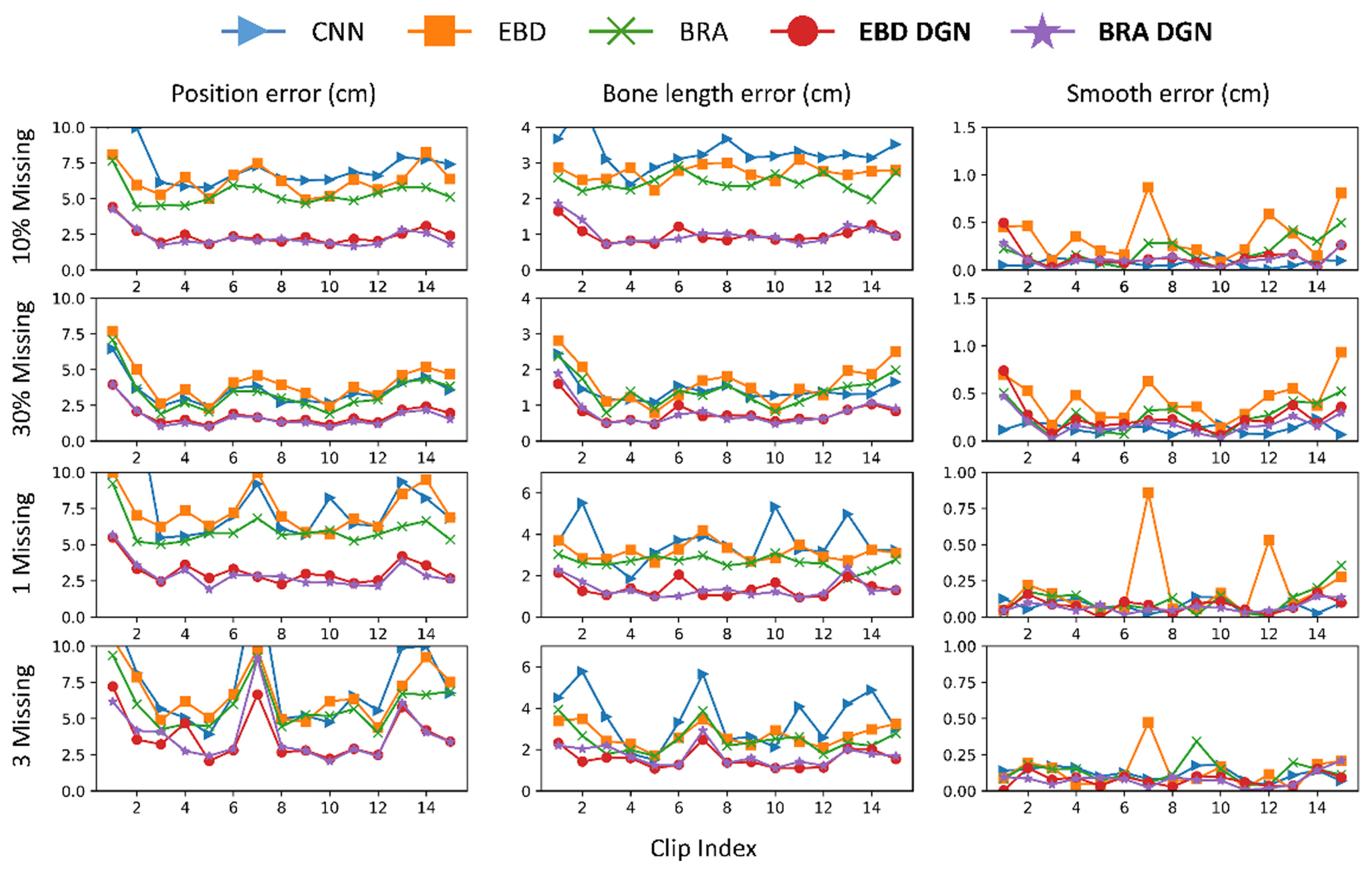

In all three experiments, the model’s performance was evaluated by position, bone length, and smooth errors. The position error is the most critical because we aim to refine incomplete data. If the position error is reduced, the bone length error is reduced too. The smooth loss, in contrast, is nonzero even for clean data because it measures the difference in joint positions between neighboring frames, so it is desirable for the smooth loss of the refined data to be close to that of the clean data. Hence, we define the smooth error as the difference between the smooth losses of the clean and refined data. For evaluation, we randomly used 70% of the CMU mocap data for training and the remainder for testing. The results of the first experiment are shown in Fig. 7 as an example, from which the model’s performance can be evaluated qualitatively. Compared with the other models, our proposed model (BRA DGN) showed the best performance in terms of the position and bone length losses. Although the node positions and, especially, the bone lengths predicted by the other models differ greatly from those of the label, BRA DGN produced a prediction nearly identical to the label. We attribute this result to the fact that BRA DGN not only reflects each loss but also considers the kinematic dependency of the human structure using the directed graph.

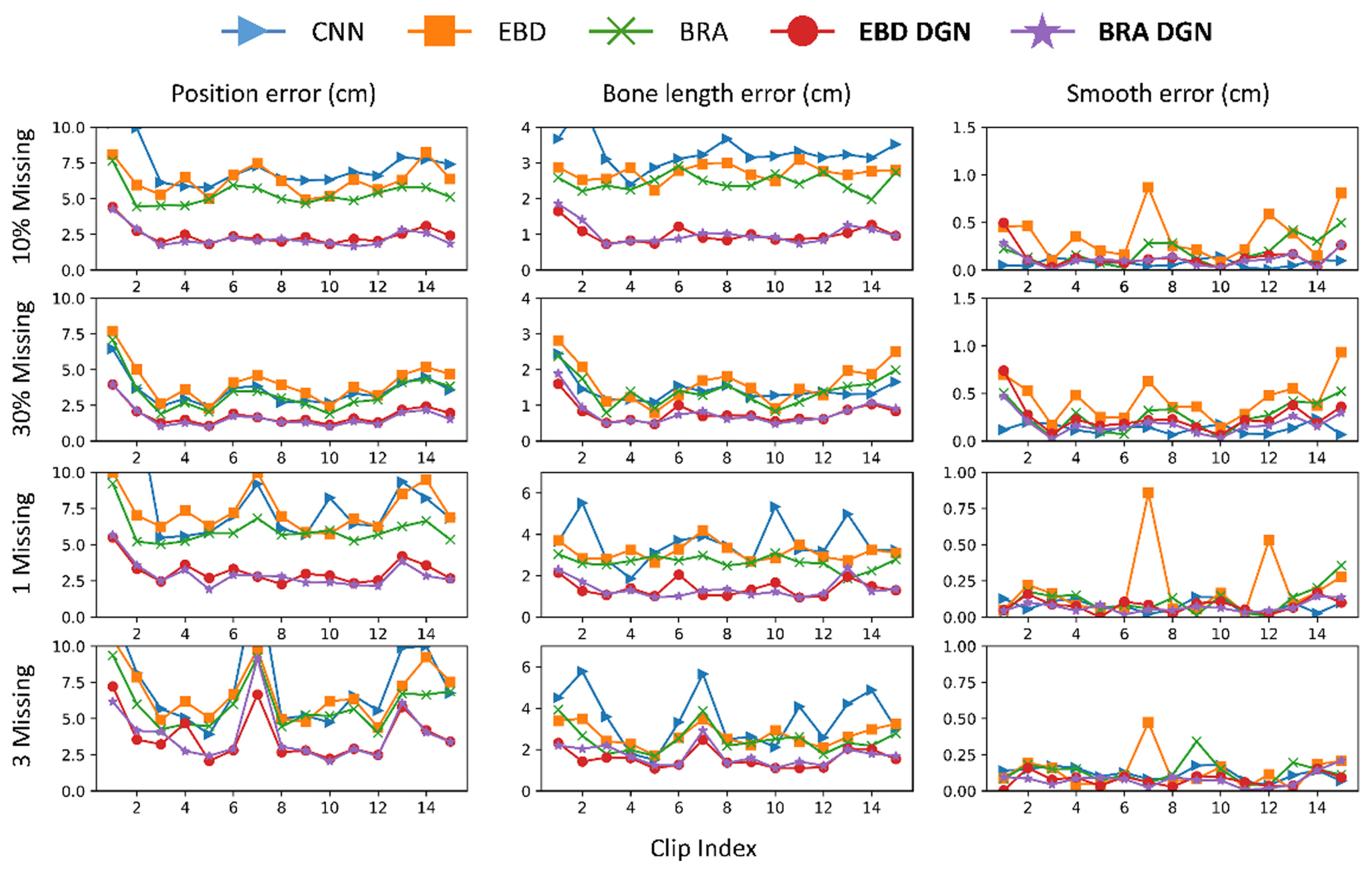

5.2.1 Missing Marker Reconstruction

We need to evaluate the model’s performance when the missing patterns of the training and test data differ because we do not know how much of the actual measured data has been occluded. Therefore, we trained the model on data with 40% of markers randomly missing and no noise to evaluate its refinement performance on missing data. Then, we tested the model on randomly missing data and on data with a few joints missing from every frame.

EBD DGN and BRA DGN showed the best performance in position and bone length errors, respectively (Fig. 8 and Table 2). Hence, models representing 3D human structures as graphs effectively extract spatial information from data, and the data generated via these models show high visual quality (Fig. 7). Moreover, the graph-based models generate refined data with a smooth loss similar to that of the clean data. In the last two rows in Fig. 8, particular nodes are missing from all frames, so those nodes must be refined with spatial information only; the other models, which extract only temporal information, therefore show significant performance degradation.

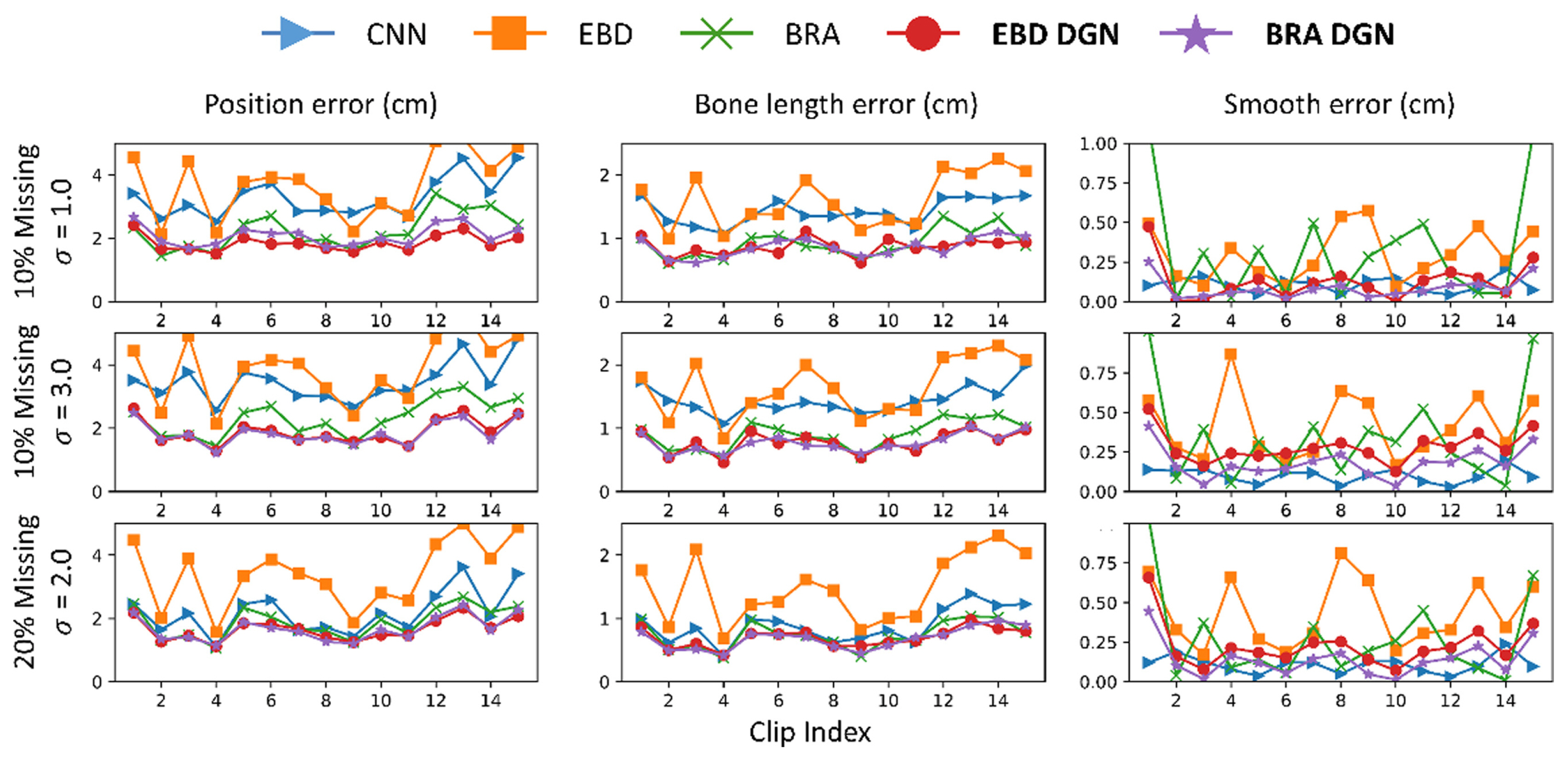

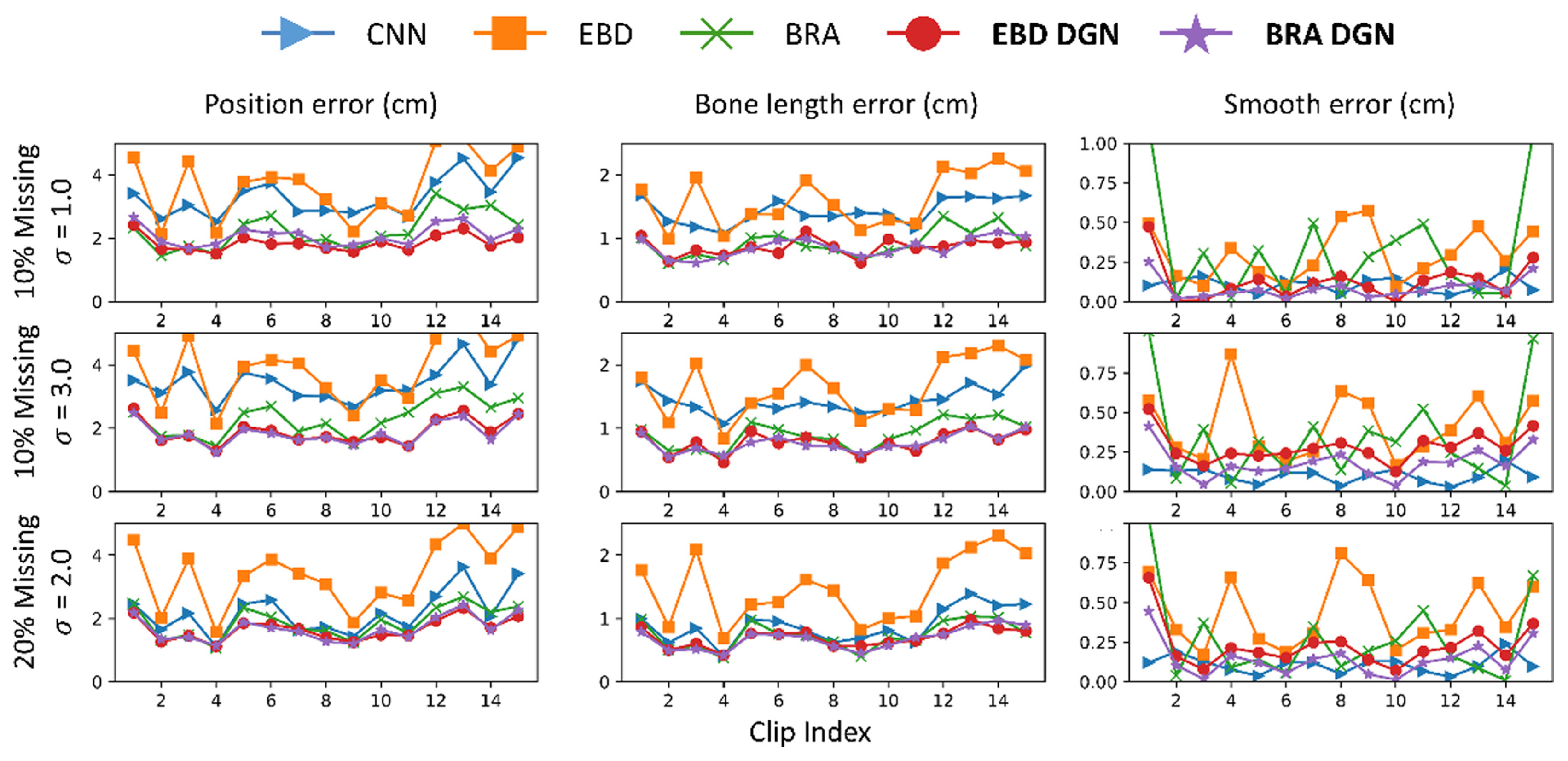

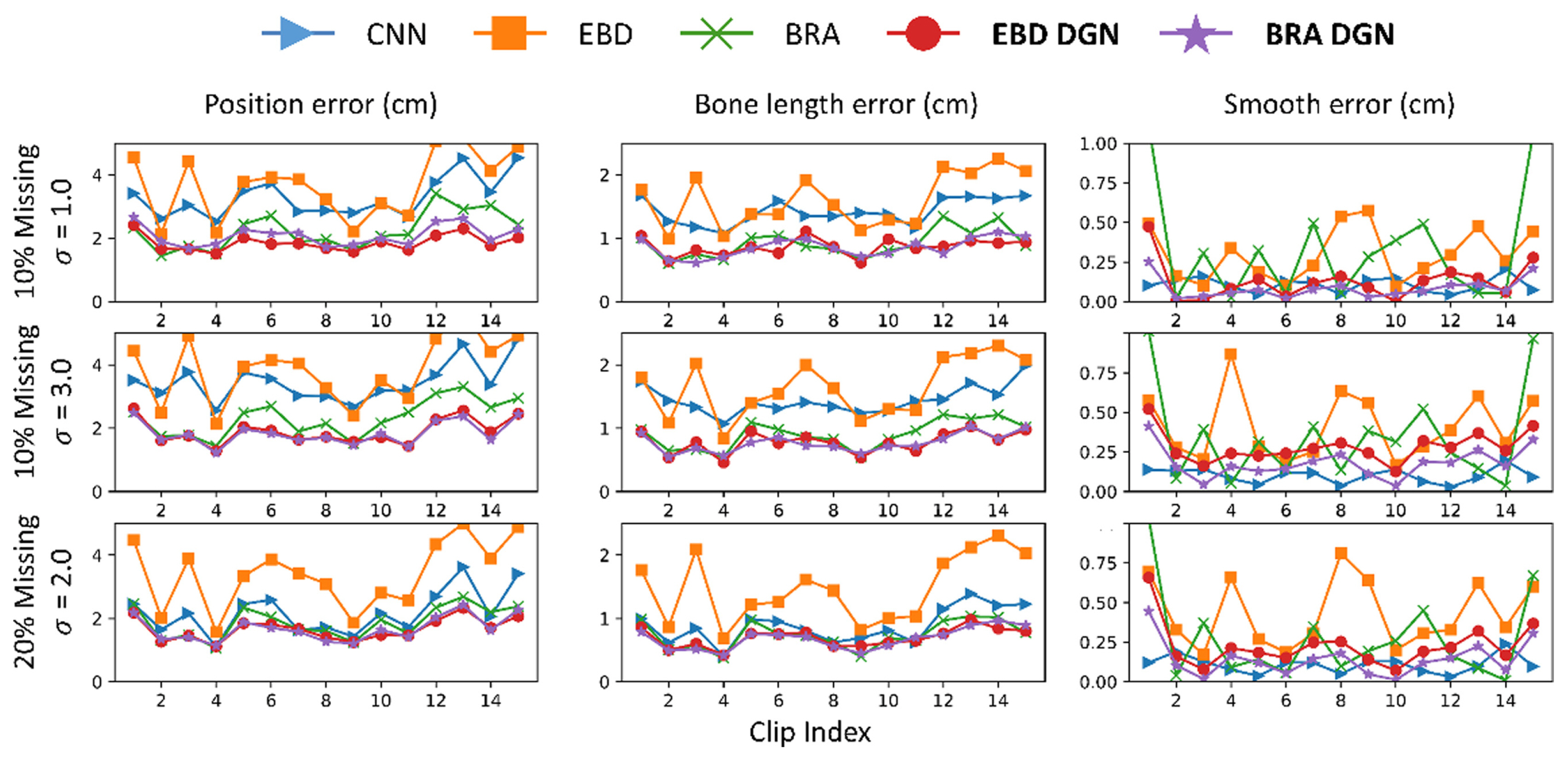

5.2.2 Missing Marker Reconstruction with Noise

In this subsection, we compare the refinement performance of models on data with both occlusion and noise. For the comparison, we train the models with 20% missing and 3-std noise data and test them with various types of incomplete data. The results are described in Fig. 9 and Table 3. BRA DGN and EBD DGN showed the best performance in the position and bone length errors, respectively. Although the test data differed significantly from the training data, they showed almost the same performance as before. However, BRA showed severe performance degradation as the difference between the training and test data increased, attributable to the failure of the FCL to extract the spatial information of the human body.

5.2.3 Missing Marker Reconstruction without Standardizing

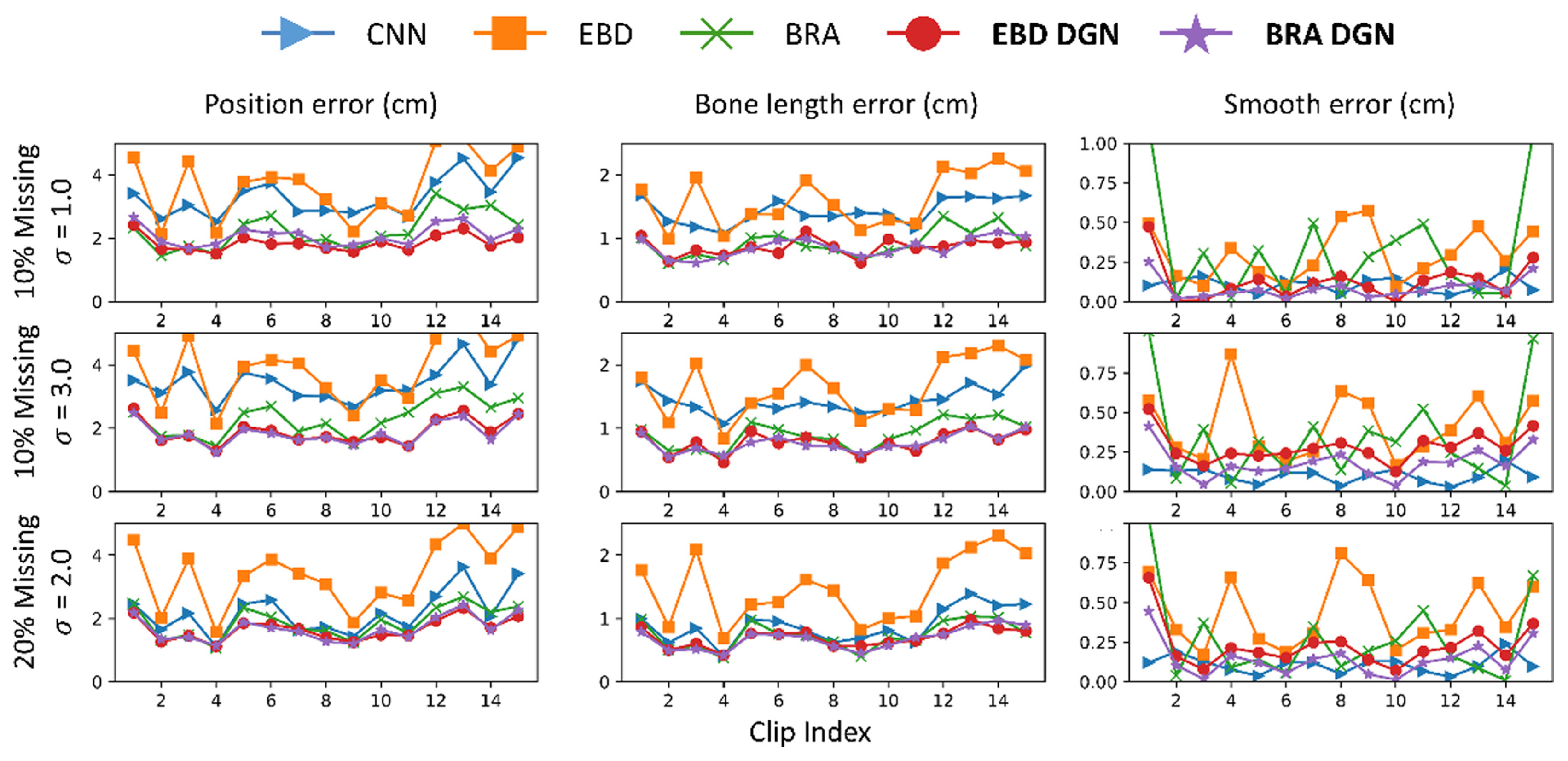

The preprocessing is intended to help the model learn by placing each marker in a statistically consistent position. In Subsection 5.1, global rotation was removed to preprocess the 3D human data; this has the limitation that several joints must be measured to make the skeleton face a particular direction. However, if the model considers the spatial information based on a GNN, it is possible to predict the missing joints without the aid of this preprocessing.

We trained the model on data with a 40% missing ratio and no noise and then tested it on data with various missing ratios (Fig. 10 and Table 4). Notably, the data used in this case were not rotated. The proposed model was unaffected by rotation, but the other models were significantly affected because they failed to consider spatial information. Hence, the proposed model requires fewer joints to be measured for rotating the data, for skeleton motion data and other data alike, and reduces the time required to standardize the data.

5.3 Hyperparameters for Comparative Models

Finally, we varied the number of DGN blocks to find the optimal count by observing the training time and the errors for the three types of loss. The training was conducted with 10% random missing data without Gaussian noise. The results, including the number of parameters for each model, the training time, and each error, are presented in Table 5. The total loss reached its minimum with three DGN blocks, and both the position loss and the smooth loss were lowest at this configuration. Performance declined when the number of DGN blocks exceeded three, which we attribute to the larger number of parameters leading to overfitting on the training dataset. In addition, we ensured a fair experimental setting by comparing the number of parameters of each comparative model, as outlined in Table 6. While CNN has fewer parameters than the other models, the remaining four models have nearly identical numbers of parameters. Notably, the proposed BRA DGN model demonstrates superior performance despite having fewer parameters than the other models. This supports the effectiveness of the proposed model.

6 Conclusion

Refining 3D skeleton motion data is an indispensable step in preprocessing motion data, particularly for inexpensive, noisy motion capture devices. We propose a graph-based model that considers spatio-temporal information for refining 3D human data. Previous studies on human data refinement used an autoencoder comprising FCLs, which considers the positions of all joints when predicting the positions of missing markers. However, an incomplete joint is affected only by its neighboring joints in the human structure, whereas distant joints are irrelevant for refinement. To preserve the kinematic dependency of the human structure, we represent it as a graph by expressing joints as nodes and bones as edges. Because joints far from the center are affected by joints nearer the center, we used an adaptive directed GNN to extract the spatial information. In addition, we applied an LSTM layer to the hidden units projected from the encoder to extract temporal information. To evaluate the proposed model, we trained and tested it in three settings: missing marker reconstruction, missing marker reconstruction with noise, and missing marker reconstruction without standardization. Our model achieved the best refinement performance among the compared models and effectively extracted the spatial information of the human body.
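The graph representation summarized above (joints as nodes, bones as directed edges, bone data created from joint data) can be illustrated with a small sketch. It is not the implementation used in this work: the five-joint skeleton in `EDGES`, the function names, and the convention that a bone vector is the target joint minus the source joint are assumptions chosen for illustration, whereas the graph in this paper uses 21 joints.

```python
import numpy as np

# Hypothetical 5-joint skeleton. Each directed edge (bone) points from the
# joint nearer the body center (source) to the joint farther away (target).
EDGES = [(0, 1), (1, 2), (2, 3), (1, 4)]

def bones_from_joints(joints, edges=EDGES):
    """Create bone vectors from joint positions.

    joints: (frames, num_joints, 3) -> bones: (frames, num_edges, 3)
    Assumption: bone = target joint position - source joint position.
    """
    src = np.array([s for s, _ in edges])
    dst = np.array([t for _, t in edges])
    return joints[:, dst] - joints[:, src]

def incidence_matrices(num_joints, edges=EDGES):
    """Source and target incidence matrices of shape (num_joints, num_edges)."""
    source = np.zeros((num_joints, len(edges)))
    target = np.zeros((num_joints, len(edges)))
    for e, (s, t) in enumerate(edges):
        source[s, e] = 1.0  # edge e leaves joint s
        target[t, e] = 1.0  # edge e enters joint t
    return source, target

joints = np.arange(2 * 5 * 3, dtype=float).reshape(2, 5, 3)
bones = bones_from_joints(joints)
source, target = incidence_matrices(num_joints=5)
```

In an adaptive variant, matrices such as `source` and `target` would serve only as the initial connectivity, with learnable weights added during training.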

Acknowledgement(s)

This research was financially supported by the Institute of Civil Military Technology Cooperation, funded by the Defense Acquisition Program Administration and the Ministry of Trade, Industry and Energy of the Korean government under grant No. 19-CM-GU-01.

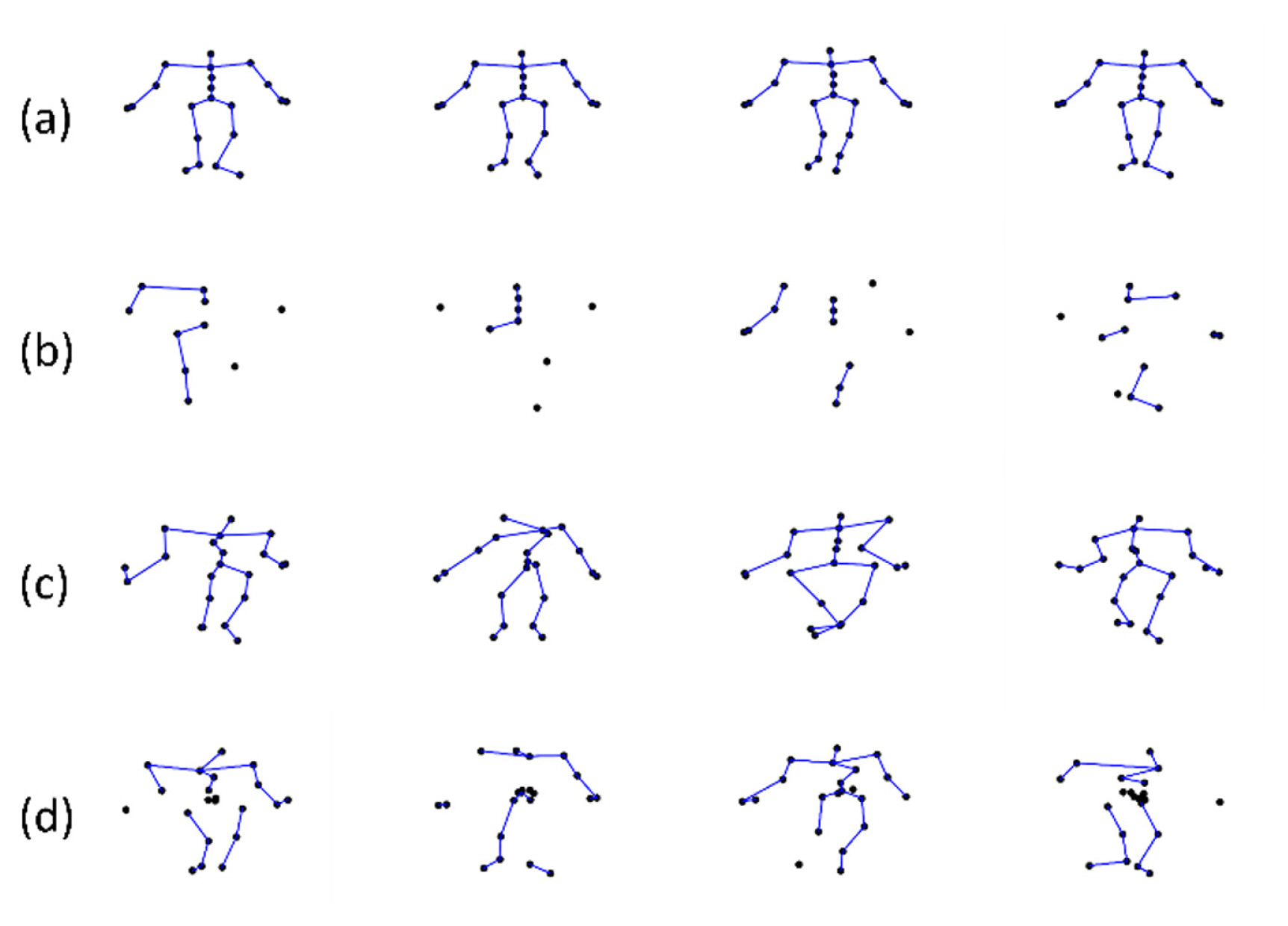

Fig. 1

Illustration of types of incomplete data: (a) clean data, (b) data with 40% missing ratio, (c) data with 5-std Gaussian noise, and (d) coexisting data with 20% missing ratio and 3-std Gaussian noise

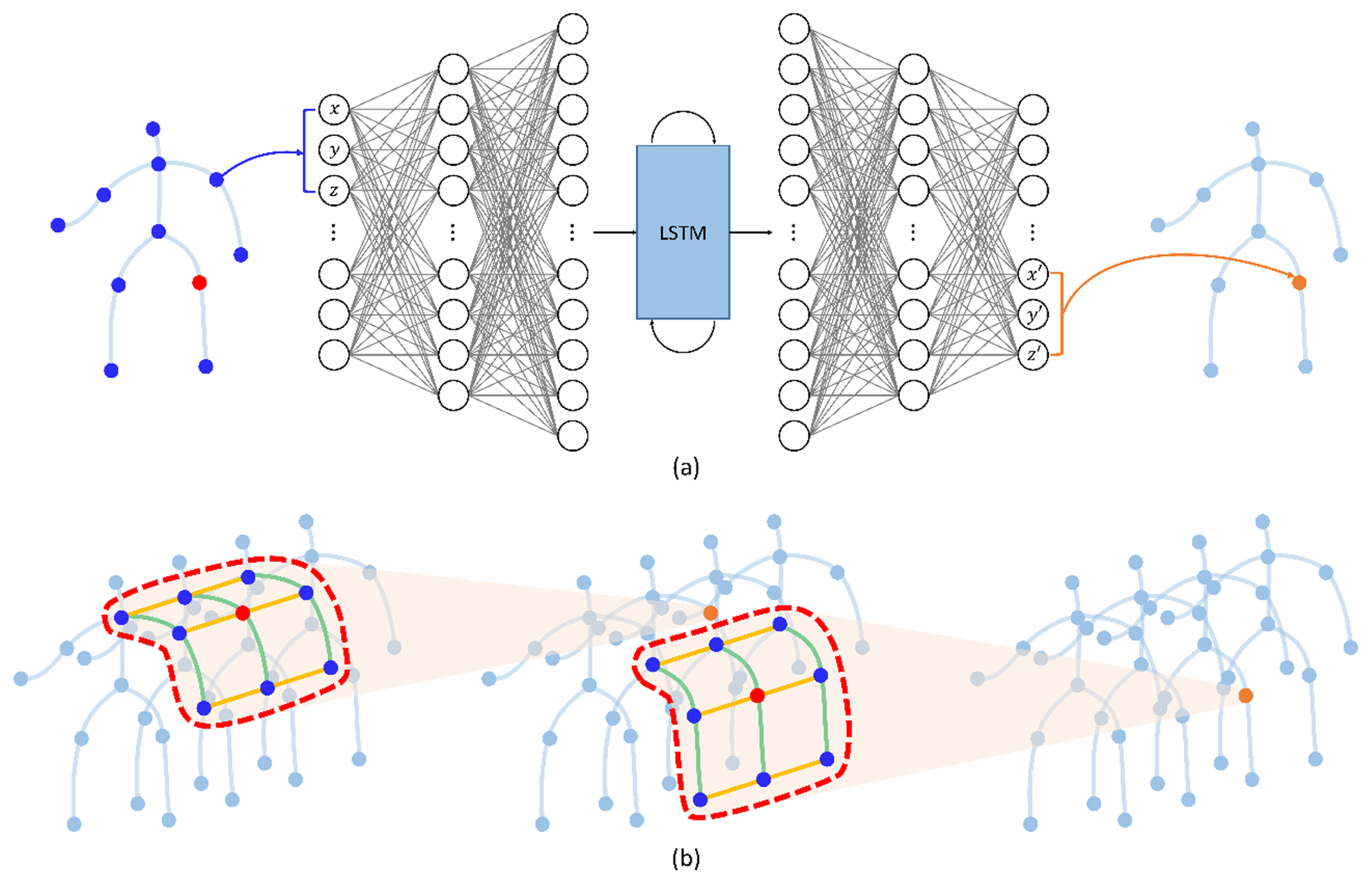

Fig. 2

The process of updating a marker with an FCL (a) and our desired result (b). The blue joints are used to update the orange node. The FCL can introduce noise because it uses information from all joints, including unrelated ones, to predict missing joints. Intuitively, the missing marker is significantly affected by the positions of the surrounding joints and bones. This intuition motivates a graph neural network (GNN) that predicts missing markers using information from the surrounding joints and bones (within the red curves), both spatially and temporally. The green and yellow lines represent the spatial and temporal connectivities, respectively

Fig. 3

The architecture of the proposed model: BRA DGN

Fig. 4

Illustration of the graph construction for CMU mocap dataset using 21 joints

Fig. 5

Illustration of updating a node and an edge in a graph: (a) an original directed graph, (b) updating the green node using the incoming orange edge and outgoing red edges, and (c) updating the green edge using the orange source node and red target node

Fig. 6

Types of error between a predicted and clean position of the markers: (a) position error, (b) bone length error, and (c) smooth error. The black and green dots represent the label and predicted joints, respectively. The red lines in (a) represent the distance error, and the red circles in (b) and (c) represent the points at the same L2 distance from the label position

Fig. 7

Qualitative illustration of the result of the missing marker reconstruction with a 40% missing ratio

Fig. 8

Performance comparison of models trained with 40% randomly missing data

Fig. 9

Performance comparison of models trained with 20% randomly missing data with 3-std Gaussian noise

Fig. 10

Performance comparison of models trained with 40% randomly missing data without standardization

Table 1

| Stage | Epoch | λ1 | λ2 | λ3 | Freezing |
|---|---|---|---|---|---|
| 1 | 200 | 1.0 | 0.0001 | - | 50 |
| 2 | 100 | 1.0 | 0.0001 | 0.0003 | 0 |
| 3 | 100 | 1.0 | 0.0005 | 0.0005 | 0 |

Table 2

Comparison of quantitative experimental results on 40% randomly missing data

| Noise Type | CNN | EBD | BRA | EBD DGN | BRA DGN |
|---|---|---|---|---|---|
| Missing 10% | 6.817±0.351 (P) | 1.594±0.140 (P) | 1.115±0.133 (P) | 1.063±0.087 (P) | 0.898±0.006 (P) |
| | 2.759±0.271 (B) | 0.704±0.061 (B) | 0.494±0.054 (B) | 0.457±0.037 (B) | 0.361±0.017 (B) |
| | 1.183±0.175 (J) | 0.279±0.112 (J) | 0.113±0.013 (J) | 0.090±0.002 (J) | 0.083±0.003 (J) |
| Missing 20% | 2.494±0.008 (P) | 1.294±0.121 (P) | 0.956±0.108 (P) | 0.846±0.049 (P) | 0.708±0.009 (P) |
| | 1.077±0.028 (B) | 0.560±0.035 (B) | 0.414±0.033 (B) | 0.381±0.037 (B) | 0.294±0.009 (B) |
| | 0.316±0.036 (J) | 0.249±0.048 (J) | 0.108±0.010 (J) | 0.107±0.004 (J) | 0.083±0.001 (J) |
| Missing 30% | 1.665±0.008 (P) | 1.176±0.113 (P) | 0.900±0.095 (P) | 0.777±0.021 (P) | 0.653±0.014 (P) |
| | 0.781±0.006 (B) | 0.501±0.025 (B) | 0.384±0.023 (B) | 0.351±0.037 (B) | 0.272±0.010 (B) |
| | 0.116±0.002 (J) | 0.244±0.043 (J) | 0.106±0.007 (J) | 0.120±0.007 (J) | 0.083±0.001 (J) |
| Missing 40% | 1.503±0.011 (P) | 1.161±0.108 (P) | 0.905±0.094 (P) | 0.780±0.033 (P) | 0.653±0.015 (P) |
| | 0.729±0.004 (B) | 0.492±0.021 (B) | 0.381±0.023 (B) | 0.347±0.035 (B) | 0.273±0.014 (B) |
| | 0.106±0.001 (J) | 0.238±0.037 (J) | 0.105±0.006 (J) | 0.133±0.008 (J) | 0.083±0.000 (J) |
| Missing 1 Joint | 12.672±1.105 (P) | 2.466±0.292 (P) | 1.923±0.199 (P) | 2.012±0.162 (P) | 1.850±0.128 (P) |
| | 5.444±0.854 (B) | 1.025±0.090 (B) | 0.849±0.078 (B) | 0.782±0.003 (B) | 0.701±0.029 (B) |
| | 0.673±0.119 (J) | 0.200±0.043 (J) | 0.142±0.027 (J) | 0.067±0.002 (J) | 0.090±0.004 (J) |
| Missing 2 Joint | 8.099±0.277 (P) | 2.802±0.376 (P) | 2.340±0.211 (P) | 2.308±0.154 (P) | 2.148±0.108 (P) |
| | 3.557±0.294 (B) | 1.137±0.123 (B) | 1.002±0.071 (B) | 0.914±0.018 (B) | 0.834±0.021 (B) |
| | 0.366±0.065 (J) | 0.209±0.042 (J) | 0.150±0.029 (J) | 0.070±0.002 (J) | 0.090±0.006 (J) |
| Missing 3 Joint | 6.453±0.152 (P) | 3.251±0.455 (P) | 2.844±0.228 (P) | 2.677±0.159 (P) | 2.525±0.104 (P) |
| | 2.959±0.114 (B) | 1.266±0.144 (B) | 1.167±0.066 (B) | 1.054±0.026 (B) | 0.975±0.022 (B) |
| | 0.220±0.034 (J) | 0.218±0.043 (J) | 0.160±0.029 (J) | 0.072±0.002 (J) | 0.090±0.003 (J) |

Table 3

Comparison of quantitative experimental results on 20% randomly missing data with 3-std Gaussian noise

| Noise Type | CNN | EBD | BRA | EBD DGN | BRA DGN |
|---|---|---|---|---|---|
| Missing 10%, Gaussian σ = 1 | 2.038±0.057 (P) | 1.421±0.027 (P) | 1.352±0.057 (P) | 1.328±0.087 (P) | 1.275±0.085 (P) |
| | 0.910±0.049 (B) | 0.568±0.026 (B) | 0.542±0.018 (B) | 0.575±0.104 (B) | 0.488±0.018 (B) |
| | 0.151±0.006 (J) | 0.232±0.028 (J) | 0.176±0.018 (J) | 0.090±0.001 (J) | 0.093±0.001 (J) |
| Missing 10%, Gaussian σ = 2 | 2.033±0.0.39 (P) | 1.413±0.037 (P) | 1.325±0.060 (P) | 1.191±0.024 (P) | 1.151±0.050 (P) |
| | 0.902±0.048 (B) | 0.555±0.033 (B) | 0.512±0.019 (B) | 0.475±0.028 (B) | 0.413±0.012 (B) |
| | 0.166±0.008 (J) | 0.230±0.024 (J) | 0.166±0.014 (J) | 0.096±0.002 (J) | 0.090±0.001 (J) |
| Missing 10%, Gaussian σ = 3 | 2.163±0.017 (P) | 1.480±0.060 (P) | 1.385±0.048 (P) | 1.229±0.006 (P) | 0.825±0.352 (P) |
| | 0.934±0.042 (B) | 0.559±0.037 (B) | 0.515±0.018 (B) | 0.448±0.007 (B) | 0.382±0.002 (B) |
| | 0.190±0.016 (J) | 0.239±0.024 (J) | 0.165±0.014 (J) | 0.113±0.003 (J) | 0.087±0.000 (J) |
| Missing 20%, Gaussian σ = 1 | 1.736±0.046 (P) | 1.341±0.032 (P) | 1.283±0.052 (P) | 1.159±0.032 (P) | 1.148±0.071 (P) |
| | 0.823±0.036 (B) | 0.524±0.023 (B) | 0.489±0.020 (B) | 0.467±0.038 (B) | 0.420±0.022 (B) |
| | 0.106±0.001 (J) | 0.234±0.026 (J) | 0.162±0.018 (J) | 0.100±0.002 (J) | 0.091±0.001 (J) |
| Missing 20%, Gaussian σ = 2 | 1.703±0.036 (P) | 1.356±0.030 (P) | 1.282±0.048 (P) | 1.117±0.004 (P) | 1.083±0.042 (P) |
| | 0.802±0.038 (B) | 0.518±0.026 (B) | 0.484±0.018 (B) | 0.414±0.011 (B) | 0.372±0.012 (B) |
| | 0.103±0.001 (J) | 0.235±0.027 (J) | 0.157±0.024 (J) | 0.108±0.003 (J) | 0.090±0.000 (J) |
| Missing 20%, Gaussian σ = 3 | 1.780±0.028 (P) | 1.436±0.043 (P) | 1.352±0.038 (P) | 1.219±0.013 (P) | 1.146±0.026 (P) |
| | 0.810±0.042 (B) | 0.526±0.028 (B) | 0.486±0.018 (B) | 0.415±0.018 (B) | 0.359±0.001 (B) |
| | 0.101±0.001 (J) | 0.244±0.029 (J) | 0.158±0.024 (J) | 0.128±0.004 (J) | 0.087±0.000 (J) |

Table 4

Comparison of quantitative experimental results on 40% randomly missing data without standardization

| Noise Type | CNN | EBD | BRA | EBD DGN | BRA DGN |
|---|---|---|---|---|---|
| Missing 10% | 4.226±0.052 (P) | 2.269±0.298 (P) | 1.711±0.079 (P) | 1.961±0.029 (P) | 1.426±0.028 (P) |
| | 1.794±0.049 (B) | 0.891±0.146 (B) | 0.693±0.040 (B) | 0.721±0.016 (B) | 0.530±0.005 (B) |
| | 0.082±0.002 (J) | 0.363±0.039 (J) | 0.222±0.034 (J) | 0.179±0.007 (J) | 0.069±0.001 (J) |
| Missing 20% | 3.217±0.026 (P) | 1.933±0.225 (P) | 1.491±0.040 (P) | 1.430±0.046 (P) | 1.038±0.021 (P) |
| | 1.372±0.018 (B) | 0.762±0.104 (B) | 0.605±0.024 (B) | 0.556±0.013 (B) | 0.415±0.007 (B) |
| | 0.076±0.000 (J) | 0.353±0.018 (J) | 0.190±0.025 (J) | 0.203±0.012 (J) | 0.078±0.002 (J) |
| Missing 30% | 2.589±0.052 (P) | 1.770±0.163 (P) | 1.418±0.041 (P) | 1.248±0.040 (P) | 0.976±0.024 (P) |
| | 1.115±0.006 (B) | 0.694±0.063 (B) | 0.568±0.017 (B) | 0.498±0.005 (B) | 0.395±0.010 (B) |
| | 0.076±0.001 (J) | 0.342±0.009 (J) | 0.173±0.017 (J) | 0.222±0.013 (J) | 0.090±0.002 (J) |
| Missing 40% | 2.428±0.051 (P) | 1.758±0.146 (P) | 1.429±0.040 (P) | 1.228±0.042 (P) | 1.028±0.018 (P) |
| | 1.086±0.004 (B) | 0.697±0.055 (B) | 0.556±0.016 (B) | 0.490±0.007 (B) | 0.417±0.009 (B) |
| | 0.079±0.001 (J) | 0.335±0.007 (J) | 0.164±0.020 (J) | 0.239±0.013 (J) | 0.103±0.003 (J) |

Table 5

Comparisons for number of DGN blocks for BRA DGN

| DGN Block | Params. | Training Time | Total Error | Error |
|---|---|---|---|---|
| 2 Blocks | 3,446k | 14.76 | 1.178 | 0.664 (P) |
| | | | | 0.250 (B) |
| | | | | 0.264 (J) |
| 3 Blocks | 3,451k | 20.50 | 1.173 | 0.658 (P) |
| | | | | 0.262 (B) |
| | | | | 0.253 (J) |
| 4 Blocks | 3,458k | 22.11 | 1.304 | 0.767 (P) |
| | | | | 0.274 (B) |
| | | | | 0.263 (J) |
| 5 Blocks | 3,463k | 24.31 | 1.296 | 0.755 (P) |
| | | | | 0.276 (B) |
| | | | | 0.265 (J) |

Table 6

Model parameters for each comparative model

| | CNN | EBD | BRA | EBD DGN | BRA DGN |
|---|---|---|---|---|---|
| Params. | 807k | 3,502k | 3,502k | 3,451k | 3,451k |


Biography

Changyun Choi received the M.S. degree in Mechanical Engineering from Pohang University of Science and Technology (POSTECH), Republic of Korea, in 2021. He has been a Data Scientist with Minds and Company, Republic of Korea, since 2019. His research interests include the application of artificial intelligence in assembly sequence planning in the automobile industry, reinforcement learning, autonomous car, and digital signal processing.

Biography

Jongmok Lee received the B.S. degree from Pohang University of Science and Technology (POSTECH), Republic of Korea, in 2021. He is currently pursuing the M.S./Ph.D. degree with the Industrial Artificial Intelligence Laboratory, POSTECH, Republic of Korea. His research interests include artificial intelligence (AI) with physics-informed neural networks and graph neural networks.

Biography

Hyun-Joon Chung received the M.S. and Ph.D. degrees in mechanical engineering from the University of Iowa, Iowa City, USA, in 2005 and 2009, respectively. He was a Research Assistant and Postdoctoral Research Scholar in the Center for Computer Aided Design from 2005 to 2015. He joined the Korea Institute of Robotics and Technology Convergence as a Senior Researcher in 2015. He is currently a Principal Researcher and the Head of the AI Robotics Center at the Korea Institute of Robotics and Technology Convergence. He serves as a General Affairs Director of the Field Robot Society in Korea. His research interests include dynamics and control, optimization algorithms, computational decision making, modeling and simulation, and robotics.

Biography

Jaejung Park received the B.S. degree from Pohang University of Science and Technology (POSTECH), Republic of Korea, in 2021. He is currently pursuing the M.S./Ph.D. degree with the Industrial Artificial Intelligence Laboratory, POSTECH, Republic of Korea. His research interests include the application of artificial intelligence (AI) in material science and mechanical systems.

Biography

Bumsoo Park received his B.S. and M.S. in Mechanical Engineering from the Ulsan National Institute of Science and Technology (UNIST), and his Ph.D. in Mechanical Engineering from the Rensselaer Polytechnic Institute (RPI), Troy, NY, USA. He is currently a post-doctoral researcher at the Korea Advanced Institute of Science and Technology (KAIST). His research interests include the development of learning control algorithms for mechanical systems with complex dynamics, and deep learning applications to mechanical systems.

Biography

Seokman Sohn received his B.S. in Mechanical Engineering from Yonsei University in 1993. He then received his M.S. degree from the Korea Advanced Institute of Science and Technology in 1995. He previously researched the diagnosis of rotating machinery. He is currently a leader of the worker safety technology team at the Korea Electric Power Research Institute, KEPCO. His major research field is the development of worker safety technology.

Biography

Seungchul Lee received the B.S. degree in Mechanical and Aerospace Engineering from Seoul National University, Seoul, Republic of Korea; the M.S. and Ph.D. degrees in Mechanical Engineering from the University of Michigan, Ann Arbor, MI, USA, in 2008 and 2010, respectively. He has been an Associate Professor with the Department of Mechanical Engineering, Korea Advanced Institute of Science and Technology, since 2023. His research focuses on industrial artificial intelligence for mechanical systems, smart manufacturing, materials, and healthcare. He extends his research work to both knowledge-guided AI and AI-driven knowledge discovery.
